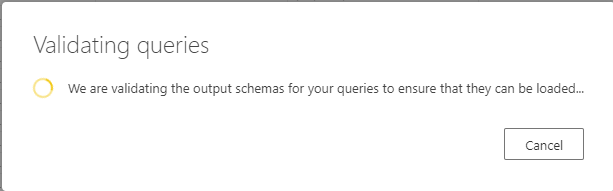

Power Query - Validating queries when saving takes an excessively long time

I'm struggling to work efficiently with Dataflows because queries take an excessively long time to 'validate' after I press the 'Save & Close' button. For entities with even a minimal amount of transformation (e.g. simply changing some column data types), I've waited over 3 hours just to save the query and return to the main entity menu/view. Should an output schema validation process take this long, regardless of the size of the underlying table data? Are others experiencing this issue as well?

This makes it simply impossible for me to work with the Dataflows product.
