Fabric Data Days starts November 4th!

Advance your Data & AI career with 50 days of live learning, dataviz contests, hands-on challenges, study groups & certifications and more!

Get registered- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Get Fabric Certified for FREE during Fabric Data Days. Don't miss your chance! Request now

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Calculate percentage for different columns and gro...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Calculate percentage for different columns and groups

Hello,

Im sure there already is an answer here to solve my problem, but I haven't been able to find it. If there already is a thread explaining the same problem feel free to link to that thread.

In my data I have a timestamp column with YYYY-MM-DD hh:mm:ss

I then have diffent columns to be used for grouping based on group of the timestamp.

Like this:

| Timestamp | Hour | 30min | 5min | BusyOccupied30 | BusyOccupied5 |

| 2017-02-23 08:00:00 | 8 | 0 | 0 | 1 | 1 |

| 2017-02-23 08:05:00 | 8 | 0 | 5 | 1 | |

| 2017-02-23 08:10:00 | 8 | 0 | 10 | 1 | 1 |

| 2017-02-23 08:15:00 | 8 | 0 | 15 | 1 | 1 |

| 2017-02-23 08:20:00 | 8 | 0 | 20 | 1 | |

| 2017-02-23 08:25:00 | 8 | 0 | 25 | 1 | |

| 2017-02-23 08:30:00 | 8 | 30 | 30 | ||

| 2017-02-23 08:35:00 | 8 | 30 | 35 | 1 | |

| 2017-02-23 08:40:00 | 8 | 30 | 40 | 1 | |

| 2017-02-23 08:45:00 | 8 | 30 | 45 | ||

| 2017-02-23 08:50:00 | 8 | 30 | 50 | 1 | 1 |

| 2017-02-23 08:55:00 | 8 | 30 | 55 | 1 | |

| 2017-02-23 09:00:00 | 9 | 0 | 0 | 1 | |

| 2017-02-23 09:05:00 | 9 | 0 | 5 | 1 | |

| 2017-02-23 09:10:00 | 9 | 0 | 10 | 1 | 1 |

| 2017-02-23 09:15:00 | 9 | 0 | 15 | ||

| 2017-02-23 09:20:00 | 9 | 0 | 20 | 1 | |

| 2017-02-23 09:25:00 | 9 | 0 | 25 | 1 | |

| 2017-02-23 09:30:00 | 9 | 30 | 30 | ||

| 2017-02-23 09:35:00 | 9 | 30 | 35 | ||

| 2017-02-23 09:40:00 | 9 | 30 | 40 | 1 | |

| 2017-02-23 09:45:00 | 9 | 30 | 45 | 1 | |

| 2017-02-23 09:50:00 | 9 | 30 | 50 | ||

| 2017-02-23 09:55:00 | 9 | 30 | 55 | 1 |

I need to calculate the the number of ones withing the groups of BusyOccupied30 AND BusyOccupied5.

Since my data is at the level of 5 min, there cannot be two ones within the same "5-min"-group for every hour. But there can by one or many ones within the same 30-min group.

What I want to achieve is to put the number of ones withing each group in relation to the number of maximum ons there can be withing that group.

For 5 min there can be a totalt of 24 ones.

For 30 min there can be a totalt of 4.

My diagram should be able to show 75% (3 out of 4) for busyoccupied30, and 58,3% for busyoccupied5.

I've been trying to play around with a measure using countdistinct, max, maxx but I can't get it to work... 😞

Regards,

Alfred

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @reddog,

Based on my understanding, we can group all the rows to 4 if we record ones in 30 min. And in the last group, there is no value in BusyOccupied30 column 2017-02-23 09:30:00 through 2017-02-23 09:55:00, the number is 3, so you want to calculate 3/4=75%, right? If it is, please review the following steps.

1. Create a calculated to identify the 30-min groups. Create rank column and get four 30-min groups based on rank column.

Rank = RANKX(Test,Test[Timestamp],,ASC) Group-30min = IF(Test[Rank]<=6,"Group1",IF(Test[Rank]<=12,"Group2",IF(Test[Rank]<=18,"Group3","Group4")))

2. Create a calculated column to identify if there is number in each group.

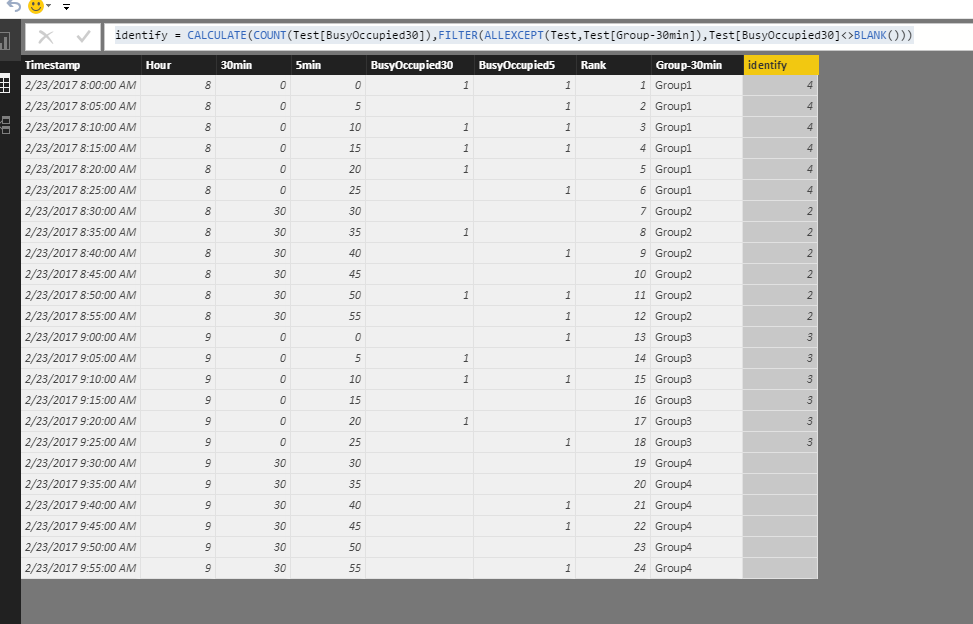

identify = CALCULATE(COUNT(Test[BusyOccupied30]),FILTER(ALLEXCEPT(Test,Test[Group-30min]),Test[BusyOccupied30]<>BLANK()))

Please see the three calculated column in the screenshot.

3. Create measure to calculate what you want.

For busyoccupied30 = DIVIDE(CALCULATE(DISTINCTCOUNT(Test[identify]),FILTER(Test,Test[identify]<>BLANK())),DISTINCTCOUNT(Test[Group-30min])) For busyoccupied5 = DIVIDE(CALCULATE(COUNTA(Test[BusyOccupied5]),FILTER(Test,Test[BusyOccupied5]<>BLANK())),COUNTROWS(Test))

4. Create a Muti-row card to display the result.

Please let me know if you have any issue.

Best Regards,

Angelia

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @reddog,

Based on my understanding, we can group all the rows to 4 if we record ones in 30 min. And in the last group, there is no value in BusyOccupied30 column 2017-02-23 09:30:00 through 2017-02-23 09:55:00, the number is 3, so you want to calculate 3/4=75%, right? If it is, please review the following steps.

1. Create a calculated to identify the 30-min groups. Create rank column and get four 30-min groups based on rank column.

Rank = RANKX(Test,Test[Timestamp],,ASC) Group-30min = IF(Test[Rank]<=6,"Group1",IF(Test[Rank]<=12,"Group2",IF(Test[Rank]<=18,"Group3","Group4")))

2. Create a calculated column to identify if there is number in each group.

identify = CALCULATE(COUNT(Test[BusyOccupied30]),FILTER(ALLEXCEPT(Test,Test[Group-30min]),Test[BusyOccupied30]<>BLANK()))

Please see the three calculated column in the screenshot.

3. Create measure to calculate what you want.

For busyoccupied30 = DIVIDE(CALCULATE(DISTINCTCOUNT(Test[identify]),FILTER(Test,Test[identify]<>BLANK())),DISTINCTCOUNT(Test[Group-30min])) For busyoccupied5 = DIVIDE(CALCULATE(COUNTA(Test[BusyOccupied5]),FILTER(Test,Test[BusyOccupied5]<>BLANK())),COUNTROWS(Test))

4. Create a Muti-row card to display the result.

Please let me know if you have any issue.

Best Regards,

Angelia

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you @v-huizhn-msft

I've marked your answer as sollution. However, I can't use it with my real data.

The data I have is telemetry data (from motion sensors), so I get thousands of samples each day. I need to calulate the total use of one sensor, a group of sensors or all sensor for a hole day, manydays and so on.

The smallest group of sensordata is in 5 min-intervall, as my exampled showed. And to be able to calulate this for all the different needs I have a column that says 1 if there has been a motion within that 5 min interval (or 0 if there hasn't been a motion within that interval). Thats why I have the column BusyOccupied5 in the example. In the database it's easy to achieve this when I group my data:

select max(busyoccupied)

from table

group by hour, 5min

But I don't know how I can achieve the same column busyoccupied30 so I get the max value from all the rows that is withing a 30-min-interval. That's why I posted the question here.

If I use your solution, I need to create a lot of "Group-30min"-columns. Groups for all the 30-min-intervals for 1 day, for office hours, for morning, afternoon and evening. And then the same for 10 and 15 min. That will be too time consuming (even if it's a one time job).

So if your solution were more dynamic when it came to the measure, not depending on a group-30min-column but instead "how many hours+30min-group are there" then it would be really good.

Thank you again for your help!

Regards,

Alfred

Helpful resources

Power BI Monthly Update - November 2025

Check out the November 2025 Power BI update to learn about new features.

Fabric Data Days

Advance your Data & AI career with 50 days of live learning, contests, hands-on challenges, study groups & certifications and more!

| User | Count |

|---|---|

| 98 | |

| 72 | |

| 50 | |

| 50 | |

| 43 |