[Data Fabric] Dataflow Gen2 Error: Unable to Retrieve Data from Oracle for Large Date Range

Hello everyone,

I'm running some tests with Data Fabric to retrieve data from Oracle and send it to the Lakehouse. I subscribed to the F2 license for testing purposes.

Essentially, I created a Dataflow Gen2 pipeline to fetch a sales table from Oracle. This table has 200 million records and is over 120 GB in size.

In my pipeline, when I set a filter to retrieve only 1 day of data from the table, the pipeline works fine. However, when I increase the filter to retrieve 1 month of data, it throws an error.

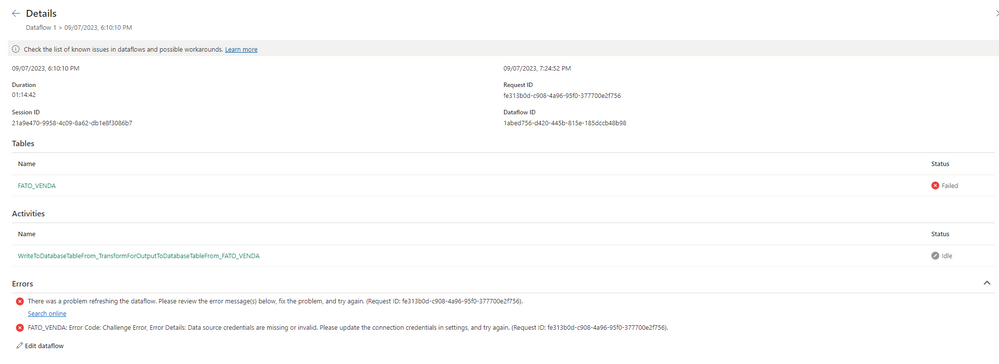

The errors I receive are:

- There was a problem refreshing the dataflow. Please review the error message(s) below, fix the problem, and try again. (Request ID: d4f7561f-b5ad-4fe1-a3ac-4ed534a59292).

- Error Code: Challenge Error, Error Details: Data source credentials are missing or invalid. Please update the connection credentials in settings, and try again. (Request ID: d4f7561f-b5ad-4fe1-a3ac-4ed534a59292).

The gateway I'm using is the On-premises data gateway, version 3000.178.9 (June 2023).

F2 is likely (far) too small for that size of data. Back in the pre-Fabric days (i.e., the present) you would need at least a P4 SKU.

It might be that your Oracle source has its own timeout enforcement.

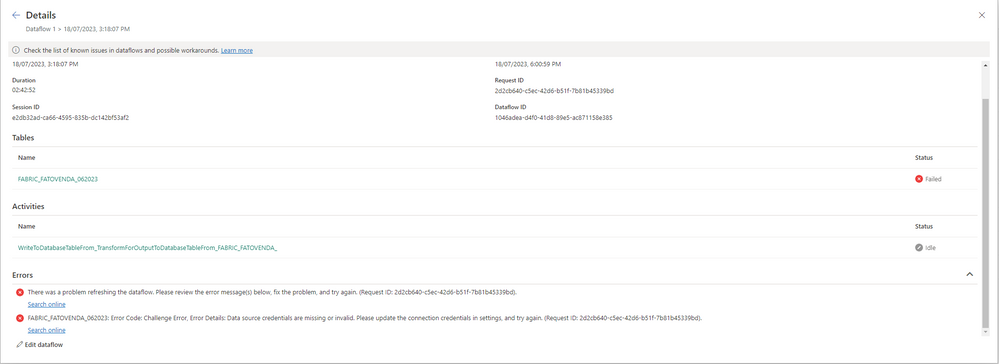

I upgraded to an F16 license and tested a 6 GB table with 17 million rows, but I received the same error.

Note: I updated the gateway to the latest version (3000.182.4).

In the legacy days, that size would require at least a P1 (F64).
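Since the one-day filter succeeds while the one-month filter fails, one thing that could be tried is loading the month as a series of one-day windows. The sketch below is not from the thread and does not address the credential error itself; it only shows how a date range might be split into daily filters. The column name `SALE_DATE` and the example dates are hypothetical, and the filter strings assume Oracle's ANSI `DATE 'YYYY-MM-DD'` literal syntax.

```python
from datetime import date, timedelta
from typing import Iterator, Tuple


def daily_windows(start: date, end: date) -> Iterator[Tuple[date, date]]:
    """Yield half-open [day, next_day) windows from start (inclusive) to end (exclusive)."""
    current = start
    while current < end:
        next_day = current + timedelta(days=1)
        yield current, next_day
        current = next_day


def window_predicate(column: str, lo: date, hi: date) -> str:
    """Build a date filter for one window. The column name is a hypothetical placeholder."""
    return (
        f"{column} >= DATE '{lo.isoformat()}' "
        f"AND {column} < DATE '{hi.isoformat()}'"
    )


# Example: split June 2023 (hypothetical range) into one filter per day.
windows = list(daily_windows(date(2023, 6, 1), date(2023, 7, 1)))
predicates = [window_predicate("SALE_DATE", lo, hi) for lo, hi in windows]
```

Each predicate could then drive a separate, smaller refresh. Half-open windows are used so that no row is loaded twice at a day boundary.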
