

Transpose Head Banger

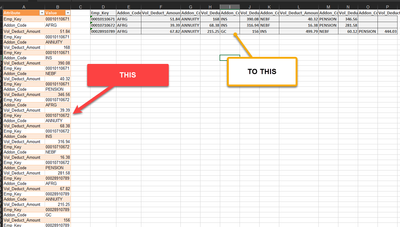

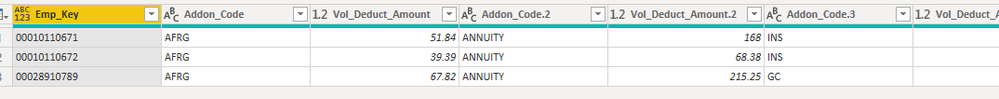

I've been at this for days so I'm hoping someone out there can help. Basically I'm trying to do the following

I've tried every combination of Pivot/Unpivot steps I can think of. Please help! Thank You.


Hi @jflores,

Please try the following code:

```powerquery
let
    // Sample data pasted via Enter Data: two text columns, Attribute and Value
    Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("ldJNa8MwDIDh/+JzEZK/Yh+zLi1h4I62G4wQclhyW5sd2sP+fUPPljNdLXgwetV1qrn8Dm/Tn9ooRCQkQl+R6jedqsdxvg7beZyWYb077p+vn/PP8DqN9+/bUF/m+/W2DB1BsM/pf7WUPtrzFwuSDxKuTSeWMhEBRVpqXnYsZxGMlmjvTTq1h8T/z3pwnhW1MIWJYKJIW0nhAxh+fRmwGIM8RP5SMloxxqLJ/rYWQwcClxF1iIRViMIYvoKQOZaCthJDkwPtJOJ+y28vd3a8VOpqY4Qqc3a8VuzqEUi0t7Wu1lpAo/r+AQ==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Attribute = _t, Value = _t]),
    // Number the rows 1, 2, 3, ...
    #"Added Index" = Table.AddIndexColumn(Source, "Index", 1, 1, Int64.Type),
    // Rows 1-3 get block 0, rows 4-6 block 1, and so on (one block per Emp_Key/Addon_Code/Vol_Deduct_Amount triple)
    #"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each Number.IntegerDivide([Index] - 1, 3)),
    // Record.FromTable takes the field names from a column called Name
    #"Renamed Columns" = Table.RenameColumns(#"Added Custom",{{"Attribute", "Name"}}),
    // Collapse each 3-row block into one record, then expand it into three columns
    #"Grouped Rows" = Table.Group(#"Renamed Columns", {"Custom"}, {{"all", each Record.FromTable(_)}}),
    #"Expanded all" = Table.ExpandRecordColumn(#"Grouped Rows", "all", {"Emp_Key", "Addon_Code", "Vol_Deduct_Amount"}, {"Emp_Key", "Addon_Code", "Vol_Deduct_Amount"}),
    // Per employee, interleave codes and amounts: code1, amount1, code2, amount2, ...
    #"Grouped Rows1" = Table.Group(#"Expanded all", {"Emp_Key"}, {{"Combine", each List.Combine( List.Zip({[Addon_Code], [Vol_Deduct_Amount]})) }}),
    // Join that list into comma-separated text, then split it into up to 6 code/amount column pairs
    #"Extracted Values" = Table.TransformColumns(#"Grouped Rows1", {"Combine", each Text.Combine(List.Transform(_, Text.From), ","), type text}),
    #"Split Column by Delimiter" = Table.SplitColumn(#"Extracted Values", "Combine", Splitter.SplitTextByDelimiter(",", QuoteStyle.Csv), {"Addon_Code", "Vol_Deduct_Amount", "Addon_Code.2", "Vol_Deduct_Amount.2", "Addon_Code.3", "Vol_Deduct_Amount.3", "Addon_Code.4", "Vol_Deduct_Amount.4", "Addon_Code.5", "Vol_Deduct_Amount.5", "Addon_Code.6", "Vol_Deduct_Amount.6"}),
    #"Changed Type" = Table.TransformColumnTypes(#"Split Column by Delimiter",{{"Addon_Code", type text}, {"Vol_Deduct_Amount", type number}, {"Addon_Code.2", type text}, {"Vol_Deduct_Amount.2", type number}, {"Addon_Code.3", type text}, {"Vol_Deduct_Amount.3", type number}, {"Addon_Code.4", type text}, {"Vol_Deduct_Amount.4", type number}, {"Addon_Code.5", type text}, {"Vol_Deduct_Amount.5", type number}, {"Addon_Code.6", type text}, {"Vol_Deduct_Amount.6", type number}})
in
    #"Changed Type"
```
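A possible variation, sketched here under stated assumptions rather than as a tested replacement: instead of joining values into text and splitting them into a fixed six pairs, it pivots each 3-row block into one row, then builds one record per employee whose column count follows the employee with the most add-ons. The `#table` rows are hypothetical placeholders, and the `PairNames` helper, the `Block` column and the `.1` suffix on the first pair are names invented for this sketch. `Number.From` on the amounts assumes a culture that reads `.` as the decimal separator.

```powerquery
let
    // Hypothetical sample: rows cycle through Emp_Key, Addon_Code, Vol_Deduct_Amount
    Source = #table(
        type table [Attribute = text, Value = text],
        {
            {"Emp_Key", "1001"}, {"Addon_Code", "DENT"}, {"Vol_Deduct_Amount", "12.50"},
            {"Emp_Key", "1001"}, {"Addon_Code", "VIS"}, {"Vol_Deduct_Amount", "4.25"},
            {"Emp_Key", "1002"}, {"Addon_Code", "LIFE"}, {"Vol_Deduct_Amount", "8.00"}
        }
    ),
    // Hypothetical helper: {"Addon_Code.1", "Vol_Deduct_Amount.1", ..., "Addon_Code.n", "Vol_Deduct_Amount.n"}
    PairNames = (n as number) as list =>
        List.Combine(List.Transform({1..n}, (i) =>
            {"Addon_Code." & Text.From(i), "Vol_Deduct_Amount." & Text.From(i)})),
    // 0-based row number; rows 0-2 form block 0, rows 3-5 block 1, and so on
    Indexed = Table.AddIndexColumn(Source, "Index", 0, 1, Int64.Type),
    Blocked = Table.AddColumn(Indexed, "Block", each Number.IntegerDivide([Index], 3), Int64.Type),
    // One row per block, with the three attribute names as columns
    Pivoted = Table.Pivot(
        Table.RemoveColumns(Blocked, {"Index"}),
        {"Emp_Key", "Addon_Code", "Vol_Deduct_Amount"},
        "Attribute",
        "Value"
    ),
    // Keep the original row order so pairs are numbered in source order
    Sorted = Table.Sort(Pivoted, {{"Block", Order.Ascending}}),
    // Per employee: one record of interleaved code/amount pairs with numbered field names
    Grouped = Table.Group(
        Sorted,
        {"Emp_Key"},
        {{"Pairs", each Record.FromList(
            List.Combine(List.Zip({[Addon_Code], List.Transform([Vol_Deduct_Amount], Number.From)})),
            PairNames(Table.RowCount(_))
        ), type record}}
    ),
    // The employee with the most pairs decides how many column pairs to create
    MaxPairs = List.Max(List.Transform(Grouped[Pairs], Record.FieldCount)) / 2,
    // Employees with fewer pairs should get null in the extra columns
    Expanded = Table.ExpandRecordColumn(Grouped, "Pairs", PairNames(MaxPairs))
in
    Expanded
```

With the placeholder rows, employee 1002 has only one pair, so its `Addon_Code.2` and `Vol_Deduct_Amount.2` cells should come out null. The expanded columns are untyped; a `Table.TransformColumnTypes` step like the one in the answer above could be added at the end.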

If the problem is still not resolved, please provide the detailed error message or the result you expect. Looking forward to your reply.

Best Regards,

Winniz

If this post helps, please consider accepting it as the solution to help other members find it more quickly.


Thank you. Someone else assisted me.


Please post sample data. Refer to How to provide sample data in the Power BI Forum: https://community.powerbi.com/t5/Community-Blog/How-to-provide-sample-data-in-the-Power-BI-Forum/ba-...

Ideally, upload the file, with any confidential or sensitive data removed, to a cloud storage service such as OneDrive, Google Drive, Dropbox or Box (OneDrive preferred) and share the link here.
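For a small sample, one alternative to uploading a file is to paste the rows as a self-contained query that others can open in Power Query's Advanced Editor. A minimal sketch; the column names follow this thread, and the values are hypothetical placeholders:

```powerquery
let
    // Hypothetical placeholder values; replace with non-sensitive rows from the real data
    Sample = #table(
        type table [Attribute = text, Value = text],
        {
            {"Emp_Key", "1001"},
            {"Addon_Code", "DENT"},
            {"Vol_Deduct_Amount", "12.50"}
        }
    )
in
    Sample
```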

--------------------------------------------------------------------------------

👍 It's been a pleasure to help you.

Help Hours: 11 AM to 9 PM (UTC+05:30)

How to get your questions answered quickly -- How to provide sample data

--------------------------------------------------------------------------------
