Join us in Barcelona for FabCon and SQLCon, September 28 - October 1, 2026.

This is best Fabric, Power BI, SQL and AI community event. How do we know? The last event sold out! Save €200 with code FABCMTY200.

Register now- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

A new Data Days event is coming soon! This time we’re going bigger than ever. Fabric, Power BI, SQL, AI and more. Don't miss out.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Max Date Values

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Max Date Values

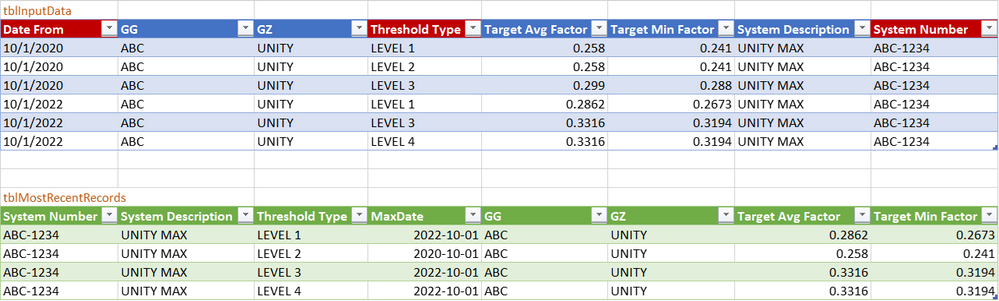

I am trying to access the records for the most current date on a very large table.

Below is a example of the data I am working with. The "System Number" and "Threshold Type" can have multiple "Date From" I need the most current.

New to Power BI , any help would be greatly appreciated

| Date From | GG | GZ | Threshold Type | Target Avg Factor | Target Min Factor | System Description | System Number |

| 2020-10-01 | ABC | UNITY | LEVEL 1 | 0.258 | 0.241 | UNITY MAX | ABC-1234 |

| 2020-10-01 | ABC | UNITY | LEVEL 2 | 0.258 | 0.241 | UNITY MAX | ABC-1234 |

| 2020-10-01 | ABC | UNITY | LEVEL 3 | 0.299 | 0.288 | UNITY MAX | ABC-1234 |

| 2022-10-01 | ABC | UNITY | LEVEL 1 | 0.2862 | 0.2673 | UNITY MAX | ABC-1234 |

| 2022-10-01 | ABC | UNITY | LEVEL 3 | 0.3316 | 0.3194 | UNITY MAX | ABC-1234 |

| 2022-10-01 | ABC | UNITY | LEVEL 4 | 0.3316 | 0.3194 | UNITY MAX | ABC-1234 |

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

OK, all you need to do is Group By System Number and Threshold Type, then filter each sub table in the Table.Group aggregation:

let

//change next line to reflect your actual data source

Source = Excel.CurrentWorkbook(){[Name="Table1"]}[Content],

#"Changed Type" = Table.TransformColumnTypes(Source,{

{"Date From", type date}, {"GG", type text}, {"GZ", type text}, {"Threshold Type", type text},

{"Target Avg Factor", type number}, {"Target Min Factor", type number},

{"System Description", type text}, {"System Number", type text}}),

#"Grouped Rows" = Table.Group(#"Changed Type", {"Threshold Type", "System Number"}, {

{"Latest", (t)=>Table.SelectRows(t, each [Date From] = List.Max(t[Date From])),

type table [Date From=nullable date, GG=nullable text, GZ=nullable text, Threshold Type=nullable text, Target Avg Factor=nullable number, Target Min Factor=nullable number, System Description=nullable text, System Number=nullable text]}}),

#"Expanded Latest" = Table.ExpandTableColumn(#"Grouped Rows", "Latest", {"Date From", "GG", "GZ", "Target Avg Factor", "Target Min Factor", "System Description"})

in

#"Expanded Latest"

Results from your data

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

OK, all you need to do is Group By System Number and Threshold Type, then filter each sub table in the Table.Group aggregation:

let

//change next line to reflect your actual data source

Source = Excel.CurrentWorkbook(){[Name="Table1"]}[Content],

#"Changed Type" = Table.TransformColumnTypes(Source,{

{"Date From", type date}, {"GG", type text}, {"GZ", type text}, {"Threshold Type", type text},

{"Target Avg Factor", type number}, {"Target Min Factor", type number},

{"System Description", type text}, {"System Number", type text}}),

#"Grouped Rows" = Table.Group(#"Changed Type", {"Threshold Type", "System Number"}, {

{"Latest", (t)=>Table.SelectRows(t, each [Date From] = List.Max(t[Date From])),

type table [Date From=nullable date, GG=nullable text, GZ=nullable text, Threshold Type=nullable text, Target Avg Factor=nullable number, Target Min Factor=nullable number, System Description=nullable text, System Number=nullable text]}}),

#"Expanded Latest" = Table.ExpandTableColumn(#"Grouped Rows", "Latest", {"Date From", "GG", "GZ", "Target Avg Factor", "Target Min Factor", "System Description"})

in

#"Expanded Latest"

Results from your data

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @ronrsnfld

Elegant solution, thanks.

Open question, and something that I did not test yet, but in terms of query performance, would your approach be faster on large tables? Any insights would be appreciated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It might be worthwhile testing various methods of solving his problem. I think that, on a large database, the efficiency of the sort would depend more on how large each subgroup is. A sort has to load the entire table and is recommended to be done as a "last step". I have assumed that a sort within a Table.Group aggregation will only load the "sub-table". But I don't know for sure. Worth testing.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I tested both approaches on a 100'000 record fact table, running each query twice.

My query, using the self-join approach ran in 0.033 ms and in 0.27 ms respectively, and your query, using a "group and filter sub-table" approach, ran in 27.49 ms and 10.92 ms respectively.

My big fact table of 100'000 records, consisted of columns "System ID", "Threshold Level", "Date", and "Value", where "System ID" and "Threshold Level" of each record was a random pick from the integer interval [1 .. 5], Date was a date between 2023-01-01 and 2023-01-01, and Value was a random number between 0 and 10.

code to generate the random table is given below just in case you want to test also:

/*

This query will generate a big table with columns

"System ID" = a dimension key where possible values are defined in ListSystems

"Threshold Level" = a dimension key where possible values are defined in ListThreshold,

"Date" = a date on or between pStartDate and pEndDate

"Value" = a random number between 0 and 10

The only input required is

* pStartDate = the earliest date that should appear in the Date column

* pEndDate = the latest date that should appear in the Date column

* pNbrOfTransactions = the desired number of transaction records in the final table

The way the big fact table is constructed:

Each dimension key pair (System ID, Threshold Level) will appear for all dates in the date range.

Hence, if we need X transactions in the final table, and we have Y dates,

then we need X/Y = Z dimension key pairs (System ID, Threshold Level)

So the steps to construct the final table are:

1. Calculate pNumberOfDays = the number of days between pStartDate and pEndDate

2. Calculate the required number of Dimension Keys: pNumberOfDimensionKeys = pNumberOfTransactions / pNumberOfDays

3. Create a table with "System ID" and "Threshold Level" as columns, having pNumberOfDimensionKeys records

4. For each record in that table, add the list of all dates {pStartDate .. pEndDate}

5. Expand the list as new rows

6. Add a column "Value" and fill with a random number between 0 and 10

*/

let

//define the starting parameters

pStartDate = #date(2023,1,1),

pEndDate = #date(2023,2,1),

pNbrOfTransactions = 100000, //the desired number of rows in the final table

//define the list of valid keys for System ID and for Threshold Level

ListSystem = {1 .. 5},

ListThreshold = {1 .. 5},

//all required input data are known, so start the generation process

//first calculate some counters

pNumberOfDays = Duration.Days(pEndDate-pStartDate) + 1,

pSystemCount = List.Count(ListSystem),

pThresholdCount = List.Count(ListThreshold),

pNumberOfDimensionKeys = Number.RoundUp(pNbrOfTransactions / pNumberOfDays),

//generate a list of System keys randomly picked from ListSystem

ShuffledListSystems = List.Generate(

() => 1, each _ <= pNumberOfDimensionKeys, each _ + 1,

each ListSystem{Number.RoundDown(Number.RandomBetween(0, pSystemCount))}

),

//generate a list of Threshold keys randomly picked from ListThreshold

ShuffledListThresholds = List.Generate(

() => 1, each _ <= pNumberOfDimensionKeys, each _ + 1,

each ListThreshold{Number.RoundDown(Number.RandomBetween(0, pThresholdCount))}

),

//create a table by adding ShuffledListThresholds and ShuffledListSystems together as columns

Table1 = Table.FromColumns(

{ShuffledListSystems, ShuffledListThresholds},

type table[System ID = text, Threshold Level = text]

),

//for each record in Table1, add all dates as a list

TableWithDates = Table.AddColumn(Table1, "Date", each List.Dates(pStartDate, pNumberOfDays, #duration(1,0,0,0))),

#"Expanded Custom" = Table.ExpandListColumn(TableWithDates, "Date"),

#"Changed Type" = Table.TransformColumnTypes(#"Expanded Custom",{{"Date", type date}}),

#"Added Custom" = Table.AddColumn(#"Changed Type", "Value", each Number.RandomBetween(0,10)),

#"Changed Type1" = Table.TransformColumnTypes(#"Added Custom",{{"Value", type number}})

in

#"Changed Type1"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Nice work. I will try to duplicate it using your generated table if I have time this weekend.

Obviously your method seems to be an order of magnitude faster than mine. Would probably make a huge difference on a large database, if that difference scales (which I suspect it would).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

to rephrase the question asked by @ronrsnfld :

What is the business key of each record?

In other words, which columns together uniquely define the record?

Can you be more specific about which record you expect to keep in the sample data that you have provided?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The fields to uniquely identify a record are: (System Number, Threshold Type, Date From).

I need to capture the ones with the most current Date From.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

In your sample data, there are still two different records for (System Number = ABC-1234) and (Threshold Type = Level 1) and (Date = 2022-10-01).

Please explicitly define which records from your sample data you wish to see returned by the query. Do you want to keep both those records, or only one of them? If only one, then which one?

So far, I feel that we lack some information to understand what you are looking for. Please define your expected solution well - e.g., "in this sample table with X rows, I expect rows A, C, and F to be returned." (where X, A, C, and F are numbers)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

| Date From | GG | GZ | Threshold Type | Target Avg Factor | Target Min Factor | System Description | System Number |

| 10/1/2020 | ABC | UNITY | LEVEL 1 | 0.258 | 0.241 | UNITY MAX | ABC-1234 |

| 10/1/2020 | ABC | UNITY | LEVEL 2 | 0.258 | 0.241 | UNITY MAX | ABC-1234 |

| 10/1/2020 | ABC | UNITY | LEVEL 3 | 0.299 | 0.288 | UNITY MAX | ABC-1234 |

| 10/1/2022 | ABC | UNITY | LEVEL 1 | 0.2862 | 0.2673 | UNITY MAX | ABC-1234 |

| 10/1/2022 | ABC | UNITY | LEVEL 3 | 0.3316 | 0.3194 | UNITY MAX | ABC-1234 |

| 10/1/2022 | ABC | UNITY | LEVEL 4 | 0.3316 | 0.3194 | UNITY MAX | ABC-1234 |

| The 3 fields in yellow create a unique record | |||||||

| Based on these unique records I need to keep the System Number and Threshold Type with the most current date. | |||||||

| In the example attached I would need to keep the ones in green. | |||||||

Notice not all Threshold Types have a record with 10/1/2022 Date From. In these cases the most current date is 10/1/2020 (see above in blue) I need to see these records

Does this help? |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

hi @Gryph87

Thanks for providing more details. Based on that, here is my proposed solution.

First, this is an overview of the input data and the result I have obtained:

I based myself on the principle of an inner join. First I created a table with system number, threshold level, and the max(Date From) for that combination. This table, which I call 'MostRecentKeys', can then be joined with an inner join on the original table. The result of this join will be that only the records from both tables are kept where the business keys are identical. This is exactly what you want.

In more detail:

1. I created the table and used "from table/range" in Excel to obtain a Power Query

2. I used the "Group by" function with aggregations on "System number" and "Threshold Type", with a max on column "Date From" to obtain a table with 3 columns. Using the Advanced Editor or the rename functionality, I call this step 'MostRecentKeys'

3. Then I used the self-join functionality to join the query on itself. In other words, I pressed the 'Merge Queries' button and joined the query with itself with an inner join type, using the three business columns at the same time in both sides of the join

4. In the code obtained in step 3, I used the Advanced Editor to manually change the joined queries to 'MostRecentKeys' and '#"Changed Type" respectively, and I ensured that the three listed keys of each had correct column names.

5. Then I expanded the merged query column to reveal all necessary data

6. To finish off, I reordered the columns

Power Query Code

The Power Query code is as follows:

let

Source = Excel.CurrentWorkbook(){[Name="tblInputData"]}[Content],

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Date From", type date}, {"GG", type text}, {"GZ", type text}, {"Threshold Type", type text}, {"Target Avg Factor", type number}, {"Target Min Factor", type number}, {"System Description", type text}, {"System Number", type text}}),

MostRecentKeys = Table.Group(#"Changed Type", {"System Number", "Threshold Type"}, {{"MaxDate", each List.Max([Date From]), type nullable date}}),

#"Merged Queries" = Table.NestedJoin(MostRecentKeys, {"System Number", "Threshold Type", "MaxDate"}, #"Changed Type", {"System Number", "Threshold Type", "Date From"}, "AdditionalData", JoinKind.Inner),

#"Expanded AdditionalData" = Table.ExpandTableColumn(#"Merged Queries", "AdditionalData", {"GG", "GZ", "Target Avg Factor", "Target Min Factor", "System Description"}, {"GG", "GZ", "Target Avg Factor", "Target Min Factor", "System Description"}),

#"Reordered Columns" = Table.ReorderColumns(#"Expanded AdditionalData",{"System Number", "System Description", "Threshold Type", "MaxDate", "GG", "GZ", "Target Avg Factor", "Target Min Factor"})

in

#"Reordered Columns"

If you like this approach, please mark this post as solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Since all the dates are the same, when you have multiple entries on the same date, how do you determine which is most recent?

Helpful resources

Power BI Monthly Update - May 2026

Check out the May 2026 Power BI update to learn about new features.

Data Days 2026 coming soon!

Sign up to receive a private message when registration opens and key events begin.

New to Fabric Survey

If you have recently started exploring Fabric, we'd love to hear how it's going. Your feedback can help with product improvements.