FabCon is coming to Atlanta

Join us at FabCon Atlanta from March 16 - 20, 2026, for the ultimate Fabric, Power BI, AI and SQL community-led event. Save $200 with code FABCOMM.

Register now!- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

View all the Fabric Data Days sessions on demand. View schedule

- Power BI forums

- Forums

- Get Help with Power BI

- Service

- Re: [Unable to combine data] accessing data sourc...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

[Unable to combine data] accessing data sources that have privacy levels

Hi! All,

I have no idea how to solve this refresh problem on PowerBI service. it was working fine in desktop but refresh is not working after I publish it. below is the error messgae

Unable to combine data] Section1/issueTimeSpentByRole/AutoRemovedColumns1 is accessing data sources that have privacy levels which cannot be used together. Please rebuild this data combination.

The table is different each time. Anyone has recommenadtion?

Gen

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @gennanchen

I'll post our Guide "Schedule a refresh on Power BI Service" https://aserve.atlassian.net/wiki/spaces/PBCFJC/pages/1333788685/Schedule+a+refresh+on+Power+BI+Serv... I hope this can be helpful for someone else.

Best regards,

Anton

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi there

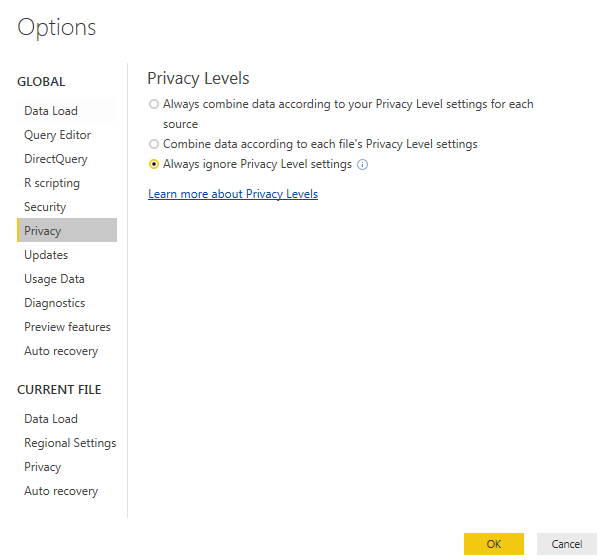

If you go back into your Power BI Desktop, I would suggest going into the settings, then click on Privacy and changing the Privacy settings to "Always ignore Privacy Level settings"

This should solve the issue

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@GilbertQ I just modified the setting in desktop and republish it. But it did not seems working also. Still run into the same error for different table. any recommendation for next?

Gen

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes. I can confirm the privacy level is None for all my datasource since I only have 1 URL for my RESAP API call.

Gen

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

No luck on this. I must say the refresh setting for PowerBi is really drving me nuts. seems not working at all.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am using techniques in below page

https://www.mattmasson.com/2014/11/iterating-over-multiple-pages-of-web-data-using-power-query/

to loop through a list of parameters from the same service calls. It seems this will run into the problem below

https://community.powerbi.com/t5/Service/Refresh-error-when-combining-multiple-Web-queries/m-p/67897

But I am not so sure. Will be great if anyone can confirm.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @gennanchen,

If your issue is related to combine multiple datasource, I'd like to suggest split them to multiple queries, then create a new blank query to invoke above source with query names.

Steps:

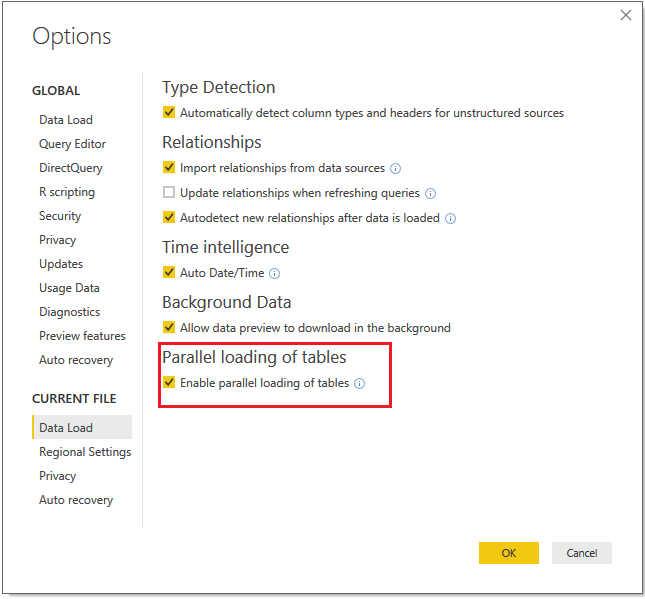

1. Turn on the "parallel loading of tables" feature.

1. Create new blank queries to store the child datasources which in original datasource.( keep them before the merged query)

2. Modify the original query to invoke function with above query name.

Regards,

Xiaoxin Sheng

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I am having the same issue, but I believe mine is specific to an ID that's required for the path of my API call; I don't know what you're data sources look like, but it seems like editing the query works fine if done right. I have split up my functions into one that requests a resource from the context of my user for that API (www.domain.com/user/[my id]/resources?) and one that uses an ID from the first to get a more thorough response (www.domain.com/resource/[resourceID]?). The first response only contains a summary of the info I need and is paged, so the function is slightly more ellaborate to iterate over multiple pages and extract only the ID I need for another endpoint. The second function is invoked from the context of a custom column, passing in the ID column as a parameter.

When I first implemented this, my second function had the parameter as part of the baseurl in my Web.Contents() function. This caused an error with refreshing because Power BI online wants to validate credentials for every datasource. In my case, that's potentially n datasources if it considers each resourceID to be another source. I then moved the parameter to the optional RelativePath attribute on my Web.Contents() function:

Web.Contents(

"www.domain.com",

[RelativePath = resourceID,

Query = [...]

]

)

Now I get the same error that this post describes. From a great deal of searching, it seems like Power BI online is doing something extra and/or differently when it comes to datasources and refreshing them that is preventing this from being possible. Can anyone confirm that or solve the problem based on the context I've given?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi everyone!

@mithrandir, I'm having the same problem as you after modify the JIRA content pack to get information of every Issue.

Have you find any answer?

Thanks!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous@Anonymous@GilbertQ

Sorry to reply to this so late. I am consuming several RESTful API's, so this ended up being a blocking issue for me. I spoke with Power BI support about the issue and there a few scenarios.

The first is "https://www.domain.com/rest/api" or similar. If you try authenticating against this URL alone, you'll usually find yourself unsuccessful or redirected. The way the Power BI service online was explained to me, is that it tries to run through and authenticate the endpoints in your dataset before allowing/attempting a scheduled refresh. In hindsight, this makes sense because your desktop application can implicitly be trusted by being your physical property, where the Power BI service on Microsoft's servers needs an extra step to verify access. Doing authentication this way also avoids tying up resources that are going fail anyway.

The second is "https://www.domain.com" with a parameter passed and appended to the baseurl. This fails for the same reason, but the difference is the Power BI service is set up to inform you that your datasource doesn't support scheduled refresh before it ever attemtps to authenticate against the endpoint. This is to avoid you putting in any arbitrary parameter that could potentially cause issues, therefore tying up resources. It would also take some work to "build" the URL's, if you will, in order to authenticate them ahead of time and support parameterized baseurls.

The last scenario relevant to this topic is "https://www.domain.com" and the use of the RelativePath property defined with a variable in the options list parameter. If you've tried this, you know that your dataset in the Power BI service will have no indication of an issue until it tries to refresh. It fails for the same reasons above, but Power BI doesn't have a check built in like it does when appending a parameter to the baseurl. For those who mentioned using JIRA data, I know that API key auth support is coming down the pipe on their end sometime soon and the current content pack implementation (https://www.domain.com/jira/rest/api/2/search) works with scheduled refresh today. If you are trying to access different resource perspectives, one of the three above scenarios should describe any issues you are having (I'm consuming the remotelinks endpoint which blocks scheduled refresh for me)

At the time, support told me that supporting scheduled refreshing for parameterized Web datasources was not on their roadmap given the large changes they have made this year and are continuing to make concerning Power BI. That led me to look into setting up a gateway, but I don't believe the scheduled refresh there differs from the Power BI service concerning the Web datasource (please correct me if I'm wrong). My current solution is refreshing my reports manually as often as I need which is not really a workaround, but I wanted to share my experience so far just in case.

One suggestion that seemed promising was to store the report in OneDrive for Business locally for automatic syncing from desktop, but the only repo to interact with Power BI desktop is using a UI testing framework and hasn't been updated in awhile. I was hoping to find a useful set of cmdlets for Powershell to interact with Power BI desktop or some other way to refresh a report automatically to make this a good workaround, but haven't found anything so far. The last option that @GilbertQ mentioned is creating a custom connector for the datasource you are working with. This currently does not support OAuth and I've heard a handful of other scenarios where consuming an API that you don't own may not be supported. I'm dealing with about a 50/50 split as far as the API's I'm consuming, so I decided not to commit considering I wouldn't be gaining scheduled refresh anyway.

Hopefully this is helpful. I am continuously investigating solutions to this problem, so I wll try to update this thread if I find any other potential routes.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have to chime in here and just say that the refreshability of PowerBI has to be the most disappointing feature I've experienced. I understand there are alot of moving parts here and managing resources etc but it's never been stable since release.

If I am using LiveQuery or doing things on one database alone (ie SQL Server) then things go ok. But as soon as I try multiple sources (ie Salesforce, SQL Server and a PostgreSQL Ruby on Rails app) things get very unstable.

One day it works, the next day it doesn't. Error messages are very vague and little support to get something that appears to work OOB actually working as it is intended. I also find solutions like disabling privacy settings to be concerning. I don't understand the innards of this enough to warrant disabling security features.

Putting aside an hour a day to refresh and republish reports is not suitable yet this kind of functionality is what everyday users are expecting.

I can't recommend PowerBI if scheduled reports are a necessity.

My next step to resolve this is to set daily jobs to copy ALL third party databases locally to our SQL Server databases on so I can run a refresh via one channel.

I'm looking forward to this situation improving though.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have been using On-Premise data sources and I have not had any errors with the Gateways at all really. It has always almost been something from the source systems.

I have not done extensive work with Salesforce, but connectors like OneDrive and SharePoint I have not had any issues with the refreshing.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Cheers for the reply.

I'll keep digging in and trying to find out why these errors are happening and see if I can provide any insight.

It is just odd that for something designed to mashup data between different sources that it would fail so consistently. I'm probably pushing the limits on something but I don't feel what I am doing should be out of the ordinary.

I do find though that the Salesforce connector does tend to return alot of Salesforce database errors (ie invalid query locators, too many cursors in the database etc etc).

It seems that nothing beats a good ole' "extract the data you need from all the databases, store it in your own database, query everything from one database". Even if you look at Salesforce's Heroku Connect it seems that is all they are doing aswell. Seems Salesforce really doesn't want you to hit their API despite their CEO and Microsoft CEO shaking hands on stage at Dreamforce 😛

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi!

@mithrandir@Anonymoushave you managed to find a solution to this? I'm struggling with similar issues.

Thanks!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

What if you had to turn off the privacy settings and then see if that works successfully?

After which you can look at this blog post by Chris Webb for the different privacy settings and how they work. It is a 5 part blog post

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

As my last resort I changed my URL in Data Source setting of the On-Premises Gateway Settings from https://www.url.com/ to https://www.url.com and changed all the privacy levels within the file and in the On-Premises Gateway Settings to Public instead of None. And suddenly my queries started to refresh! I'm not sure which one did the trick, but I'm glad that it's working now.

At first I tried to do this query by using a function dedicated to opening the URLs. But naturally the function approach failed in the cloud environment. Now my query goes through a list of IDs by using a RelativePath variable in the options parameter of the Web.Contents() -function with fixed string as the url parameter. The URL is in the form of the "https://www.url.com/api/rest/" which is different from the one I entered to the gateway settings. I was almost certain that the reason that it basically uses different URLs ("https://www.url.com/api/rest/ID1", "https://www.url.com/api/rest/ID2" etc.) in a single query was the reason for the gateway to fail at refreshing the data. But it seems like that this wasn't the case.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Anonymous,

I´m trying to connect to the API of JIRA so I don't need some kind of Gateway.

But in your explanation you said that you connect to urls like this: https://www.url.com/api/rest/ID1

Looks like and api, isn't it? How you do this?

Helpful resources

Power BI Monthly Update - November 2025

Check out the November 2025 Power BI update to learn about new features.

Fabric Data Days

Advance your Data & AI career with 50 days of live learning, contests, hands-on challenges, study groups & certifications and more!