
Data Exceeded Limit

I set up auto refresh on a report I recently worked on, but the next day I received this error:

"The amount of data on the gateway client has exceeded the limit for a single table. Please consider reducing the use of highly repetitive strings values through normalized keys, removing unused columns, or upgrading to Power BI Premium."

Activity ID: dada54c8-f920-493b-8710-cc2ce111bc68

Request ID: a079ea3a-458d-3234-345b-5b79823f844a

Time: 2022-01-24 21:23:51Z


Hi @BITSMH ,

You should check the following.

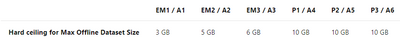

First, check whether Premium is set up correctly. Premium comes in several SKUs, and each SKU has a different limit on dataset size.

Second, make sure that the Premium capacity workloads are configured correctly in the admin portal.

service admin premium workloads

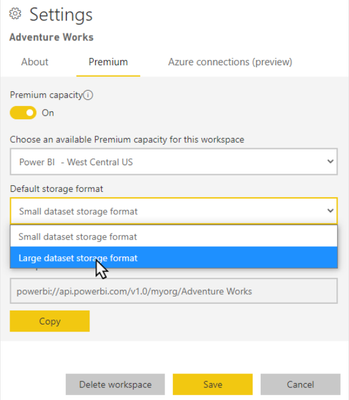

Third, enable the large dataset storage format in Power BI Premium. Large datasets in the service do not change the Power BI Desktop model upload size, which is still limited to 10 GB; instead, datasets can grow beyond that limit in the service on refresh.

Fourth, as the error message suggests, reduce the use of highly repetitive, long string values and use normalized keys instead. Add a primary key and move most of the calculations to DAX to limit the dataset size, or follow the method described in the article "How to Minimize Data Load Size for Tables in Power BI".
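To illustrate what "normalized keys" means, here is a minimal Python sketch, assuming the column can be reshaped before it reaches Power BI (in practice this would usually happen in the source query or in Power Query). The helper `normalize_column` and the sample region strings are hypothetical. Each row keeps only a small integer key, and every distinct string is stored once in a lookup table that you would relate to the main table on that key.

```python
from typing import Dict, Iterable, List, Tuple


def normalize_column(values: List[str]) -> Tuple[List[int], Dict[int, str]]:
    """Replace each string with an integer key (hypothetical helper).

    Returns the key column (one int per input row) and a lookup table
    that maps each key back to its distinct string value.
    """
    key_of: Dict[str, int] = {}
    keys: List[int] = []
    for value in values:
        if value not in key_of:
            key_of[value] = len(key_of)  # first occurrence gets the next key
        keys.append(key_of[value])
    lookup = {key: value for value, key in key_of.items()}
    return keys, lookup


def utf8_bytes(values: Iterable[str]) -> int:
    """Total UTF-8 size of a collection of strings."""
    return sum(len(v.encode("utf-8")) for v in values)


if __name__ == "__main__":
    # Hypothetical column with long, highly repetitive strings.
    regions = ["North America - Enterprise Sales"] * 3 + ["Europe - Mid-Market Sales"] * 2
    keys, lookup = normalize_column(regions)
    print(keys)    # [0, 0, 0, 1, 1]
    print(lookup)  # {0: 'North America - Enterprise Sales', 1: 'Europe - Mid-Market Sales'}
    # String bytes before vs. after: each distinct value is stored once.
    print(utf8_bytes(regions), utf8_bytes(lookup.values()))  # 146 57
```

The saving grows with the number of rows per distinct value: a column with millions of rows but only a handful of distinct strings shrinks to an integer column plus a tiny lookup table.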

Best Regards

Community Support Team _ chenwu zhu



Try running your data model through DAX Studio or Tabular Editor, where you can run a best-practice check on your dataset and performance tests to get a better understanding of where it is underperforming and failing.

