Join the Fabric User Panel to shape the future of Fabric.

Share feedback directly with Fabric product managers, participate in targeted research studies and influence the Fabric roadmap.

Sign up now- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Get Fabric certified for FREE! Don't miss your chance! Learn more

- Power BI forums

- Forums

- Get Help with Power BI

- Service

- Re: Analyze in Excel Memory Error

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Analyze in Excel Memory Error

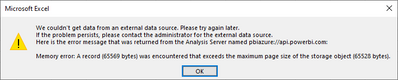

I have a Power BI dataset in the Power BI service and when I try to use Analyze in Excel against the model I receive the error "Memory error: A record (65569 bytes) was encountered that exceeds the maximum page size of the storage object (65528 bytes)."

I understand that this error is about the size of data in a row being larger than the maximum size allowed in a tabular data model. But, I do not receive this error when I use Power BI desktop to connect to the dataset in the Power BI service which should be using the same API and have the same Analysis Services limitations as using analyze in Excel. Also, this error is thrown when I try to use fields/measures from any tables in the model.

- Has anyone run into this error before?

- Does anyone know how the size of a row in Power BI is calculated or if it can be viewed in Power BI desktop somehow?

- Is the size of a row just the size in that table or is it the combined size of all rows that have a relationship to that table?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

//same issue also exists on stackoverflow (https://stackoverflow.com/questions/63155367/how-to-solve-excel-memory-error-a-record-that-exceeds-t...)

It would be great if there where a possibility to have the size limit increased or lifted!

We encountered the same issue with a PowerBI dataset which is continuously growing as we include more tables (and therefore columns) to the model. The error seems to be specific to excel and not limited to PowerBI. Apparently the size limitation is on a row / record level where a single record exceeds in the case of excel 65528 bytes. Most likely there might be a similar limitation in other tools: https://www.ibm.com/support/pages/record-size-exceeds-internal-limit-65535-bytes

As seen in the IBM help article a potential solution might be to check if there are any columns with particularly long text fields.

I already tried to narrow down the columns which might cause the issue by extracting the table sizes using DAX studio, however, did not find a particular culprit as the size of the columns caused by the number of lines/records does not relate to the size limitation on one record causing the issue in excel. As reference, the largest column in our dataset is the date field in the date table, which according to DAX studio is 10028.046875 KB large, but removing it only decreases the size of the record issue in excel by 40 byte from 92929 byte to 92889 byte.

In case you want to check the same on your dataset (link was already posted by vanessafvg in this tread):

https://exceleratorbi.com.au/table-size-from-power-bi-desktop/

Hence in our case large record size is likely not caused by one particular column but the extensive amount of columns.

As found out with trial and error, the size limitation is affected by all tables available in the dataset even if some might be not connected via relationships.

Interim solution will be to use a subset/perspective of the dataset for excel analysis purposes.

Overall steps to narrow down a solution in your case:

- check for large columns and potentially remove them

- keep your dataset as lean as possible, remove unnecessary / unneeded columns

- work with part of the dataset for excel analysis purposes which only includes the data needed in excel

-> all of these steps will help you to decrease your overall record size until you are below the size limit

Appreciate if someone found and could share a better solution to this issue which does not require the dataset to be reduced in size. This is the best solution I am so far able to come up with.

Related posts to this issue:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi there, i had the same issue:

Re: Refresh error / Exceed maximum page size of th... - Microsoft Fabric Community

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

https://exceleratorbi.com.au/table-size-from-power-bi-desktop/

https://www.sqlbi.com/tools/dax-studio/

If I took the time to answer your question and I came up with a solution, please mark my post as a solution and /or give kudos freely for the effort 🙂 Thank you!

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for the link, I tried out DAX Studio and I am able to pull column size but I need to figure out row size which I do not see an option for when I look through the DMVs. Do you know if there is a DMV that I am missing that would show row size for a specific table?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Not sure if you have seen this link, maybe there are some tips in there for you?

https://powerbi.tips/2020/07/analyze-in-excel-the-advanced-method/

If I took the time to answer your question and I came up with a solution, please mark my post as a solution and /or give kudos freely for the effort 🙂 Thank you!

Proud to be a Super User!

Helpful resources

Join our Community Sticker Challenge 2026

If you love stickers, then you will definitely want to check out our Community Sticker Challenge!

Power BI Monthly Update - January 2026

Check out the January 2026 Power BI update to learn about new features.

| User | Count |

|---|---|

| 19 | |

| 10 | |

| 8 | |

| 7 | |

| 7 |

| User | Count |

|---|---|

| 51 | |

| 43 | |

| 28 | |

| 27 | |

| 25 |