A new Data Days event is coming soon!

This time we’re going bigger than ever. Fabric, Power BI, SQL, AI and more. We're covering it all. You won't want to miss it.

Learn more- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Level up your Power BI skills this month - build one visual each week and tell better stories with data! Get started

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Parsing JSON Using a Schema

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Parsing JSON Using a Schema

I have a table in an SQL database that holds the records of submitted forms. The form fields aren't held in columns in the database, but instead are stored in a JSON string in a column. To get the data that's been submitted into columns for Power BI, I can use the Parse JSON function in Power Query on the JSON column and get the records and expand them out into columns.

The trouble I run into is I am relying on Power Query to identify the column names from the JSON, and this doesn't always work because not all fields are filled out all the time, so some get missed by Power Query, e.g. when a new form is submitted with a field that wasn't previously completed, this gets missed by Power Query as it's not listed in the query.

The SQL database also holds the schema for the JSON and I was hoping to use this to define the fields but I couldn't find any examples online how to do this? Is there an easy way to do this?

One way I have found to do this is to use one of the functions in the database called "parseJSON". Applying this to the schema column, I can create a list of all the fields in the schema and then use this query in the original query on the forms data in place of the column names when expanding the columns of the parsed JSON. This works but I was wondering if I am missing something where there is a better way to do what I'm trying to achieve?

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You will probably need to have a version that you can make minor modifications to for each schema. You can create a master schema map with an alignment to the required transformations.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@jennratten Thanks for sharing this pbix. I've had a look through but can't see where you are using a JSON schema to parse a JSON string into columns. Not sure if that's because I don't have credentials for some of the datasources.

In my example I have a table with column "Schema", this holds the strings of the JSON schemas for all the forms that are used. A simple example of a string is like this:

[

{

"type": "Date",

"icon": "calendar",

"colour": "#84aced",

"label": "",

"description": "",

"readOnly": false,

"required": false,

"placeholder": "",

"hideExpression": "",

"settings": {

"minDate": "",

"maxDate": ""

},

"id": "Date1",

"valid": true

},

{

"type": "Location",

"icon": "street-view",

"colour": "#fca52a",

"label": "",

"description": "",

"readOnly": false,

"required": false,

"placeholder": "",

"hideExpression": "",

"settings": {

"options": ""

},

"id": "Location1",

"valid": true

}

]

In another table, I have the records of all the forms submitted, and the values submitted are represented as a JSON string following the schema of the form used and are stored in a column. The results string could look like this for the schema above:

{

"Date1": "2019-01-23T00:00:00+00:00"

}

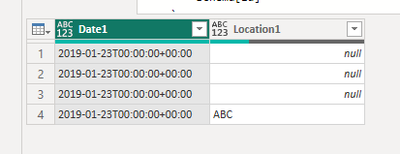

Notice how it doesn't contain the Location, as that's not a mandatory field in the form. So if I used PQs Parse JSON function, it would only parse the "Date1" field, and if a new form got submitted with the "Location1" field, the query wouldn't catch it when the dataset is refreshed in the service.

I'm looking for a way to extract all the fields from the schema (they all have the 'id' tag) and use them as column names when parsing the JSON results. Hope that makes sense!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello again - Yes, this makes sense. Thanks for providing some sample data. Please add the script below as a new blank query. You can grab the list of fields expected from the schema and the use it to expand the records returned in the forms json like this.

SCRIPT

let

// JSON Schema

Source =

{

[

type = "Date",

settings = [

minDate = "",

maxDate = ""

],

id = "Date1",

valid = true

],

[

type = "Location",

settings = [

options = ""

],

id = "Location1",

valid = true

]

},

#"Converted to Table" = Table.FromList(Source, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

#"Expanded Column1" = Table.ExpandRecordColumn(#"Converted to Table", "Column1", {"type", "icon", "colour", "label", "description", "readOnly", "required", "placeholder", "hideExpression", "settings", "id", "valid"}, {"type", "icon", "colour", "label", "description", "readOnly", "required", "placeholder", "hideExpression", "settings", "id", "valid"}),

#"Expanded settings" = Table.ExpandRecordColumn(#"Expanded Column1", "settings", {"minDate", "maxDate", "options"}, {"settings.minDate", "settings.maxDate", "settings.options"}),

Schema = Table.TransformColumnTypes(#"Expanded settings",{{"type", type text}, {"icon", type text}, {"colour", type text}, {"label", type any}, {"description", type any}, {"readOnly", type logical}, {"required", type logical}, {"placeholder", type any}, {"hideExpression", type any}, {"settings.minDate", type any}, {"settings.maxDate", type any}, {"settings.options", type any}, {"id", type text}, {"valid", type logical}}),

// Sample Data

Data =

{

[

Date1 = "2019-01-23T00:00:00+00:00"

],

[

Date1 = "2019-01-23T00:00:00+00:00"

],

[

Date1 = "2019-01-23T00:00:00+00:00"

],

[

Date1 = "2019-01-23T00:00:00+00:00",

Location1 = "ABC"

]

},

#"Converted to Table1" = Table.FromList(Data, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

ExpandRecords = Table.ExpandRecordColumn (

#"Converted to Table1",

"Column1",

// List of fields to expand

Schema[id]

)

in

ExpandRecordsRESULT

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @jennratten . This looks like a good solution for my example. The trouble I have is, in the real database, there are hundreds of different form templates, all with different levels of nesting and different field names. So I won't be able to type those all out in a query. I think given the "id" field is consistent across all form templates, that should make it easier. But what i'm not sure about is how to handle all the different nesting.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You will probably need to have a version that you can make minor modifications to for each schema. You can create a master schema map with an alignment to the required transformations.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Helpful resources

Power BI Monthly Update - April 2026

Check out the April 2026 Power BI update to learn about new features.

Data Days 2026 coming soon!

Sign up to receive a private message when registration opens and key events begin.

New to Fabric Survey

If you have recently started exploring Fabric, we'd love to hear how it's going. Your feedback can help with product improvements.