How to Bypass Salesforce 2000 Rows Limitation

Hey guys,

A few weeks ago I ran into the 2000-row limit while importing data from Salesforce into Power BI. After a little research I found that the limit comes from the Salesforce API, that Microsoft couldn't do anything to fix it, and that a lot of people were struggling with the same issue.

However, I finally found a quick and easy way to bypass this problem, and since this community helped me a lot when I started working with Power BI, I feel it is my turn to give back. With this approach we no longer have to manually download the report from Salesforce and replace an Excel file, or create many reports to split the data into pieces of 2000 rows.

The idea is to import data from Salesforce reports into a Google Sheet, and then import the data from that spreadsheet into your Power BI file.

- Open a sheet in Google Sheets.

- Go to Add-Ons > Get Add-Ons.

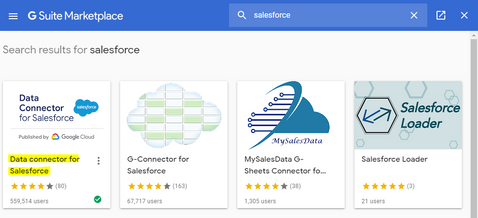

- Search for 'Salesforce' and install the 'Data Connector for Salesforce'.

- Log in to your Salesforce account and authorize the permissions Google Sheets needs to access the data.

- Go to Add-ons > Data Connector for Salesforce > Open.

- You will find this menu:

- Now you can import data from all your reports, with no row limit, by clicking on "Reports".

- After that you can click on "Refresh" and schedule auto data refreshes every 4, 8 or 24 hours.

- Open Power BI and go to Get Data > From Web.

- Get a shareable link to your Google Sheet (with at least view permission) and paste it.

- Modify your Google Sheet link from:

https://docs.google.com/spreadsheets/d/google-sheet-example/edit?usp=sharing

To:

https://docs.google.com/spreadsheets/d/google-sheet-example/export?format=xlsx&id=google-sheet-example

That's it. You can now work with your Salesforce data without the 2000-row limit and with automatic refreshes.
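The link change in the last step can also be scripted. Below is a minimal Python sketch, assuming the sharing link has the `/spreadsheets/d/<id>/...` shape shown above; the function name `to_export_url` and the `fmt` parameter are made up for illustration and are not part of Power BI or Google Sheets.

```python
import re
from urllib.parse import urlparse


def to_export_url(share_url: str, fmt: str = "xlsx") -> str:
    """Turn a Google Sheets sharing link into the export link from the steps above.

    Hypothetical helper: it only rewrites the URL text, it does not contact Google.
    """
    path = urlparse(share_url).path
    # The sheet id is the path segment right after /spreadsheets/d/
    match = re.search(r"/spreadsheets/d/([^/]+)", path)
    if match is None:
        raise ValueError(f"not a Google Sheets link: {share_url}")
    sheet_id = match.group(1)
    return (
        "https://docs.google.com/spreadsheets/d/"
        f"{sheet_id}/export?format={fmt}&id={sheet_id}"
    )


print(to_export_url(
    "https://docs.google.com/spreadsheets/d/google-sheet-example/edit?usp=sharing"
))
```

The printed link is the one you paste into Get Data > From Web.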

Hope it helps!

Hi @fernandohiltner ,

That's pretty cool! Thanks for sharing that!

If this post helps, please consider accepting it as the solution to help others find it more quickly.

Hi,

I am able to connect. However, not all the columns in my Salesforce report are being retrieved.

Is there anything I could do?

Regards,

You can upvote the request to increase that 2000-row limitation. The more votes, the more likely it will be changed. Here is that link. https://ideas.salesforce.com/s/idea/a0B8W00000GdYvXUAV/power-bi-communicate-with-microsoft-developme...

I don't think this works anymore. The connector now also downloads only 2000 records into the Google Sheet.

It then tells you to re-authorize to get "all data", but that just does not work. You can revoke access in SF and authorize again in Google Sheets over and over; the message just keeps popping up and, of course, the connector continues to download only 2000 records.

(Not to mention the message is outdated too - you need to revoke the permissions in your personal settings in SF).

And of course, there is no way to contact Google (the maker of the add-on) about this.

Just want to say thank you for this neat workaround, awesome stuff!👍

My Salesforce object has 137 columns. When I try to extract it, I get the following error: Error: Limit Exceeded: URL Length of URLFetch.

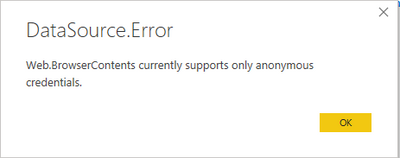

Does this method allow authentication with a username and password or some other method, or does it only work through the unsecured web connector (where anyone with the link can access the data)?

Hi Fernando,

Thanks for the solution here, it was fantastic.

Unfortunately I still have one issue to solve. My report is quite long, and when I use Google Sheets the Salesforce connector shows the error "Exceeded maximum execution time", so I can't import the report. I have searched for this error and everyone says the limit is 5 minutes of running time, but I haven't found a solution. Could you please help me with it?

Thanks,

MatheusFF

Hi Matheus!

@Anonymous

Firstly, thank you for your feedback.

Regarding your problem, I have never faced this issue before. My guess is that it is a Google Sheets limit on how long scripts can run. I would split the report into smaller parts (so that each request finishes in under 5 minutes) and then combine them all inside Power BI. Is this a viable option for you?
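To illustrate the "split, then combine" idea, here is a rough Python sketch of the combine step only. It assumes each smaller report has been exported as CSV text with identical column headers; `combine_parts` and the sample data are hypothetical. Inside Power BI itself you would append the queries rather than run a script like this.

```python
import csv
import io
from typing import Dict, List


def combine_parts(parts: List[str]) -> List[Dict[str, str]]:
    """Append the rows of several CSV exports that share the same header.

    Hypothetical helper for illustration; not part of Power BI or the add-on.
    """
    combined: List[Dict[str, str]] = []
    header = None
    for text in parts:
        reader = csv.DictReader(io.StringIO(text))
        if header is None:
            header = reader.fieldnames
        elif reader.fieldnames != header:
            # Parts with different columns cannot be stacked safely
            raise ValueError("all parts must have the same columns")
        combined.extend(reader)
    return combined


# Made-up sample data standing in for two smaller report exports
part_a = "Id,Amount\n001,10\n002,20\n"
part_b = "Id,Amount\n003,30\n"
rows = combine_parts([part_a, part_b])
print(len(rows))
```

The header check is there because parts with mismatched columns would otherwise be stacked silently into a misleading table.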

Hope it helps!
