Dataflow develop solutions

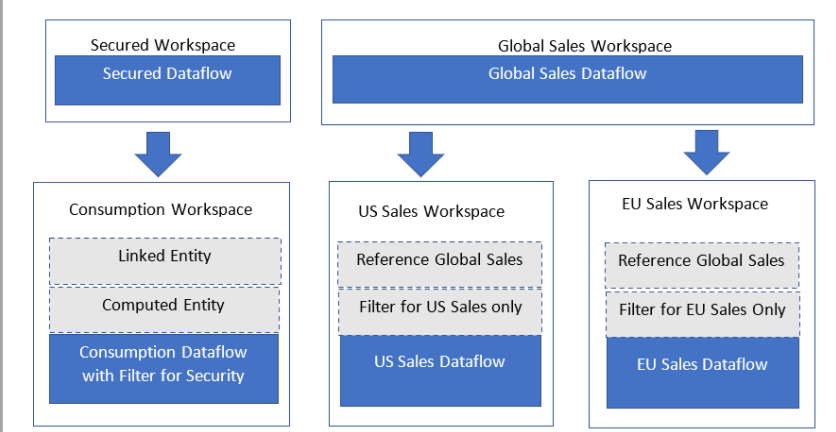

Suppose I have users spread across three regions: EU, APAC, and US.

We have a database in the EU and create a dataset from this database.

Users in APAC and the US need to refer to this dataset and build their own reports. How can we do that?

What is the solution with the best performance? Can a Power BI dataflow get data from another region, as in the following example?

Develop solutions with dataflows - Power BI | Microsoft Docs

Done this way, does each region have its own copy of the dataset?


Another consideration is that a Dataflow may use Data Lake type storage. This is effectively CSV for the data and JSON for the metadata. There is an option to create proper databases using the Large Dataset feature and toggling the Compute settings. Using an Azure-based multi-region approach could be more effective and easier to control.


The connection from Power BI to Azure does not require a Data Gateway; it is optional. Data Gateways are normally used for on-premises to Power BI data integrations. In that scenario, the data gateway is positioned in the on-premises data center, close to the data source. If you want to use a Data Gateway for Azure, I think your Data Gateway VM needs to be in the same region as the Azure SQL database (note that it will be a separate machine, and you will need a cluster of them). I am not a security expert, so you will have to find out the pros and cons of the above; the accountant in me would question the extra cost.

As for the non-EU users pulling the data to their own capacity, I think there is a question here about the multi-geo location of your Microsoft tenant. It is possible that all your users are effectively in the same core region. This may be the case for Power BI shared capacity. I think you will need to discuss this with your Microsoft account team to make sure you understand who and what is where.

If you do find you are loading EU-located data to the non-EU regions, make sure to use incremental loads where possible to avoid lengthy run times. However, you may want to consider Azure-based regional data replication instead of relying on Power BI. It may give you a greater level of control over the data sync.
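To illustrate the general idea behind an incremental load, here is a minimal Python sketch. It is not Power BI's incremental refresh feature, just the underlying pattern: remember a watermark (the latest modification time already loaded) and copy only rows newer than it. The field name `modified_at` and both function names are made up for this example.

```python
from datetime import datetime

def incremental_rows(source_rows, last_loaded):
    """Return only the rows modified after the previous successful load."""
    return [row for row in source_rows if row["modified_at"] > last_loaded]

def run_load(source_rows, target, last_loaded):
    """Append new rows to target and return the updated watermark."""
    new_rows = incremental_rows(source_rows, last_loaded)
    target.extend(new_rows)
    # If nothing is new, keep the old watermark unchanged.
    return max((r["modified_at"] for r in new_rows), default=last_loaded)

# Hypothetical sample data: three rows in the EU source, two already loaded.
eu_rows = [
    {"id": 1, "modified_at": datetime(2024, 1, 1)},
    {"id": 2, "modified_at": datetime(2024, 1, 2)},
    {"id": 3, "modified_at": datetime(2024, 1, 3)},
]
regional_copy = []
watermark = run_load(eu_rows, regional_copy, datetime(2024, 1, 2))
print(len(regional_copy), watermark)
```

Only the row with `id` 3 crosses regions on this run, which is why run times stay short as the source table grows.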


Hi @globalmc

I hope you find the following useful.

It sounds like you want to import the same data into separate Premium capacities in different regions for the benefit of the end users. If so, I have a couple of questions for you to consider:

- Are you expecting users to consume data outside of the Power BI Web Service? For example:

  - Excel Pivot Table

  - Excel Cubemember/Cubevalue

- Are you splitting a P3 instance into three P1 instances?

I recommend locating your Premium capacity close to the data source so the import runs quickly. You have suggested the data is imported from a Europe-based server, so I would put the capacity in Europe.

Users from the US and APAC won't experience slow performance when using reports in the Web Service. At that point, there is no data transfer; it is just the visual image of the data. There will be some lag, but the real delay will come from the query processing performed by the Power BI Service. Importantly, that processing is not done by the user's web browser.

This will leave you with a single dataflow and a single dataset to manage, so maintenance will be easier.

However, there are two situations where this processing is done on the user's side:

- Large Data Exports from Server to Client Machine

- 1000s of Cubemember/Cubevalue requests

In both situations, non-European users will have to wait longer for the data (bytes) to be delivered from Europe. Cubemember/Cubevalue is actually the worst performing. Essentially, each formula is a send and receive to the Power BI cube. If each send and receive takes x milliseconds to travel to and from Europe, this delay needs to be multiplied by thousands of formulas.
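The multiplication described above can be sketched in a few lines of Python. The round-trip times and formula count are made-up numbers for illustration, and the sketch assumes each formula is resolved one at a time, as described in this post; any batching of requests would change the result.

```python
def total_wait_seconds(round_trip_ms, formula_count):
    """Total delay if every formula costs one sequential round trip."""
    return round_trip_ms * formula_count / 1000.0

# Hypothetical figures: 5000 cube formulas in one workbook.
formulas = 5000
same_region = total_wait_seconds(10, formulas)    # assumed 10 ms round trip
cross_region = total_wait_seconds(150, formulas)  # assumed 150 ms round trip

print(same_region)   # 50.0
print(cross_region)  # 750.0
```

With these assumed figures, the same workbook goes from under a minute to more than twelve minutes purely because of distance.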

If this is likely to be a problem, then your approach of importing the data into a regional dataset would work. I am wondering whether it needs to be imported into a dataflow and then into a dataset within each region. I would expect importing from the Europe dataflow straight into the regional dataset to be as fast as going from the Europe dataflow to a regional dataflow to the regional dataset.


Thanks for your valuable recommendations.

So in this case, can non-EU users create a dataset in their own capacity that imports from the EU Azure SQL database?

Is there a secure way to do that? Would you recommend using a Power BI gateway?
