

Copy gen1 dataflow to lakehouse/warehouse

Hi all,

The data output option in gen2 dataflow is great, but it's missing incremental refresh (for now). So I need to use gen1 for our purpose.

How can I copy data from a gen1 dataflow into my lakehouse/warehouse?

Thanks.


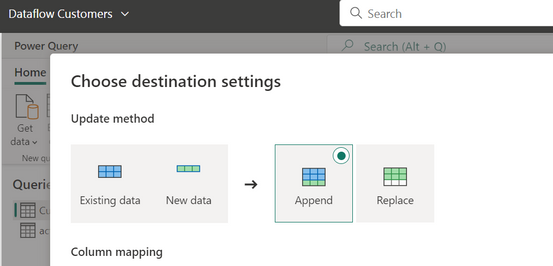

I know append is not incremental, but it's the tool to make it. If you create a dataflow that only brings yesterday data, it will append new data. The difference between append and incremental is that you need to think the logic behind the incremental and prepare your source for doing it. That's the way Gen2 can do it now.

Another alternative is using dataflow gen1 storing at Lake gen2. Then create a shortcut with fabric lakehouse.

Your alternative also work, creating first de gen1 then get it with gen2 to insert it in the lakehouse.

I hope that make sense.

Happy to help!


Hi. Did you try dataflow Gen2? I haven't tested it with that scenario yet, but when I was testing Gen2 for simple solutions, it asked at the end of the configuration whether you want to replace the data or append to it. I know the docs say incremental refresh doesn't work, but this might be a different way to accomplish the objective.

I think it will let you make it incremental.

If you just can't, note that a Gen1 dataflow can't pick any destination other than an Azure Data Lake Gen2 (a storage account with the hierarchical namespace setting enabled). You can sync a workspace with the lake, and dataflow Gen1 will store its data there. That would be the only way to have the dataflow copy data to a destination like a lake. Otherwise the dataflow uses its own black-box storage, which can only be connected to from Power BI Desktop.

I hope that helps

Happy to help!


Hi @ibarrau

Appending data is not the same as incremental refreshing, as it will append all data without checking existing data (I believe). The gen1 incr. refresh does that.

A workaround would be to add a timestamp in my query and later filter for only the latest data. According to some documentation, that is what the bronze (or landing) layer is supposed to do.

For now I've created a gen1 dataflow with incremental refresh plus a gen2 dataflow with the automatic sink, and I run them sequentially in a DF pipeline. That works as intended, but is not optimal.
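To make the append vs. incremental distinction concrete, here is a minimal Python sketch of the timestamp workaround described above. It is only an illustration of the logic, not dataflow code: the row layout, the `id` key, the `loaded_at` column, and both function names are hypothetical.

```python
from datetime import datetime

def append_rows(table, new_rows):
    """Blind append: every incoming row is added, even if its key already exists."""
    return table + new_rows

def latest_per_key(rows, key="id", stamp="loaded_at"):
    """Keep only the most recently loaded row for each key."""
    latest = {}
    for row in rows:
        current = latest.get(row[key])
        if current is None or row[stamp] > current[stamp]:
            latest[row[key]] = row
    return sorted(latest.values(), key=lambda r: r[key])

# Hypothetical landing (bronze) table after a first load.
bronze = [
    {"id": 1, "name": "Alice", "loaded_at": datetime(2024, 1, 1)},
    {"id": 2, "name": "Bob", "loaded_at": datetime(2024, 1, 1)},
]
# A second refresh brings one changed row (id 2) and one new row (id 3).
refresh = [
    {"id": 2, "name": "Robert", "loaded_at": datetime(2024, 1, 2)},
    {"id": 3, "name": "Carol", "loaded_at": datetime(2024, 1, 2)},
]

bronze = append_rows(bronze, refresh)   # 4 rows, id 2 appears twice
silver = latest_per_key(bronze)         # 3 rows, one per id, newest version wins
```

The landing table keeps every appended version, and the filter step afterwards is what produces one current row per key.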


I know append is not incremental, but it's the tool you can build it with. If you create a dataflow that only brings in yesterday's data, it will append just the new data. The difference between append and incremental is that you need to think through the incremental logic yourself and prepare your source for it. That's the way Gen2 can do it for now.

Another alternative is using dataflow Gen1 with its storage on Azure Data Lake Gen2, then creating a shortcut to it from a Fabric lakehouse.

Your alternative also works: creating the Gen1 dataflow first, then reading it with Gen2 to insert the data into the lakehouse.

I hope that makes sense.

Happy to help!
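As a rough sketch of the "only bring yesterday's data" idea, the filter could look like the Python below. It only shows the date-window logic; in a real dataflow the equivalent filter would live in the query itself. The `modified_at` column and the function names are assumptions for illustration.

```python
from datetime import date, datetime, time, timedelta

def yesterday_window(today):
    """Return the half-open [start, end) datetime range covering the day before `today`."""
    start = datetime.combine(today - timedelta(days=1), time.min)
    end = datetime.combine(today, time.min)
    return start, end

def rows_to_append(source_rows, today, stamp="modified_at"):
    """Select only the rows whose timestamp falls inside yesterday's window."""
    start, end = yesterday_window(today)
    return [r for r in source_rows if start <= r[stamp] < end]

# Hypothetical source rows with a modification timestamp.
source = [
    {"id": 1, "modified_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "modified_at": datetime(2024, 1, 2, 23, 59)},
    {"id": 3, "modified_at": datetime(2024, 1, 3, 0, 0)},
]

# Running the refresh on 2024-01-03 picks up only rows modified on 2024-01-02.
new_rows = rows_to_append(source, today=date(2024, 1, 3))
```

The half-open window means a row stamped exactly at midnight belongs to the next day's run, so consecutive daily refreshes neither skip nor double-load a row. A missed or repeated refresh would still leave gaps or duplicates, which is the part of the incremental logic you have to handle yourself.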


I have a list of Customers in a Snowflake data source with a dimension table that has a Time Updated column. Is there a method using Data Flow Gen2 that would support SELECTing just the rows with a Time Updated after the last refresh and UPDATING just those rows that changed?