Get Fabric certified for FREE!

Don't miss your chance to take the Fabric Data Engineer (DP-700) exam on us!

Learn more- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

We've captured the moments from FabCon & SQLCon that everyone is talking about, and we are bringing them to the community, live and on-demand. Starts on April 14th. Register now

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Help! Nested Table Solution Creating Over a Billio...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Help! Nested Table Solution Creating Over a Billion Tables!

Hi everyone,

I work in education, and I’ve been given a table containing the dates a student has a certain context flag in our system. The flags are usually time-limited so a student could be at the school now that used to have one of these flags, but it has since expired and vice versa. Before now we’ve only been able to pull out the current context, but with the date range table, we can create a table that links to our calendar and student table that will allow us to use these conditions to filter the underlying fact tables in our model such as attendance and behaviour.

I initially tried combing the dates into a date range and pivoting that cell, but it turns out that some kids have multiple date ranges which we weren’t expecting. To solve for that we grouped the rows and then pivoted the date ranges whilst there were in nested tables then subsequently merged them onto a separate table that had the expanded enrolment dates for all students. I then passed through the data from that table into the nested table and tested it to see if it fell between the start and end date of the characteristic. I then evaluate all the rows in the nested table and if any of them are true return true for the table. I repeat that process for each of the context flags.

The Data File and PBIX are uploaded to google which shows all of this working but my problem is that whilst the approach I’ve tried in power query gets me to the output I’m looking for, the query potentially creates over a billion single-row tables which is ridiculous for what I’m trying to achieve and subsequently will not load.

If anyone can help optimise this approach so that it’s as efficient as possible that would be such a big help. Alternatively, the raw data is included along with our desired output in the excel file, so any other approaches are more than welcome.

Thanks in advance for your help. (Probably your level @ImkeF)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

While it's difficult to visualize these nested tables, I agree that you don't need to go all of the combining of the tables. You should even be able to use the full dataset, not just a year, if you just use your relationships and good DAX. 100M rows is nothing for a well written measure. I wish I could see some of your data...

--Nate

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @StanleyBlack ,

I've not gone through a full solution, but there's a few things you can quickly do to reduce the size of your final output:

1) Specify reasonable bounds on the data

1.a) You have people who enrolled 20 years ago or more - do you need to report on all of these, or just those enrolled in the last 5-10 years, for example?

1.b) Your end date for open enrolments is in the year 2286 - you should create an [endDateCapped] field with the following code to limit the future dates that need rows creating:

let

Date.Today = Date.From(DateTime.LocalNow())

in

if [END_DATE] > Date.Today then Date.Today else [END_DATE]

2) Don't affirm the negative in your ouput - in your example output you're trying to create a row for every student/date/criteria to say they're 'not' that thing. Your output should be limited to only students/dates/criteria that ARE that thing at any given point. The reporting output can be managed via DAX measures to identify when they're not that thing, you don't need to explicitly hold all that data.

3) This may completely negate 1) and 2) - you don't have to 'explode' your data into a single row for each student/date/criteria combination. You can just load the data in it's original form to the data model and use measures to check the dates to report on. For example, a measure similar to this could tell you how many students had medical conditions over time:

_noofWithMedCondOverTime =

VAR __cDate =

MAX(calendar[date])

VAR __noofMedCon =

CALCULATE(

DISTINCTCOUNT(contextTable[PERSON_UNIQUE_ID]),

contextTable[TAG_IDENTIFIER] = "DEMOGRAPHIC__STUDENT__HAS_MEDICAL_CONDITION",

KEEPFILTERS(__cDate >= contextTable[START_DATE]),

KEEPFILTERS(__cDate <= contextTable[END_DATE_CAPPED])

)

RETURN

IF (ISBLANK(__noofMedCon), BLANK(), __noofMedCon)

When applied to a visual that uses the [date] field of a DISCONNECTED/UNRELATED calendar table, DAX will do the date checks for you and count up the values for those that meet the criteria on that date.

Pete

Now accepting Kudos! If my post helped you, why not give it a thumbs-up?

Proud to be a Datanaut!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you @BA_Pete ,

We took your approach with point one where we replaced dates in the cleaning phase:

#"Replaced End Date with today + 1 Year" = Table.ReplaceValue(

#"Extracted Date",

#date(2286, 11, 20),

Date.AddYears(Date.From(DateTime.LocalNow()), 1),

Replacer.ReplaceValue,

{"END_DATE"}

), We just opted for 1 year plus as we do some projections but nothing too fancy.

I'll circle back to 2.

For point 3 we do use the exact same approach when calculating student numbers actually:

Students on Roll =

VAR _LastDate = LASTDATE ( 'Calendar'[Date] )

RETURN

CALCULATE(

DISTINCTCOUNT ( 'Student Data'[Unique Student ID] ),

'Student Data'[DOA] <= _LastDate,

'Student Data'[Leaving date] > _LastDate || ISBLANK ( 'Student Data'[Leaving date] )

)

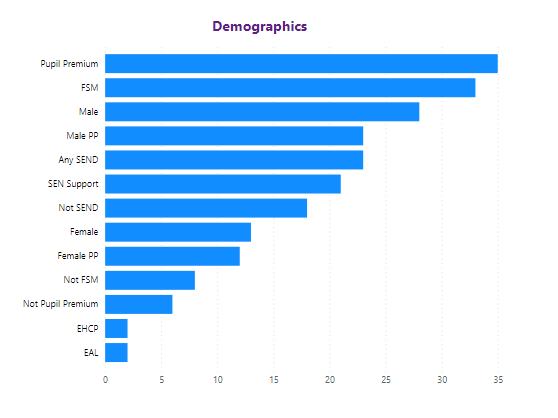

The issue, in this case, is not that we want to count those tags but rather use those tags to filter other tables. So we have an attendance table that just shows what attendance mark they got that session and we may want a user to select a slicer to change the data or have the various tags as an axis on a chart like this.

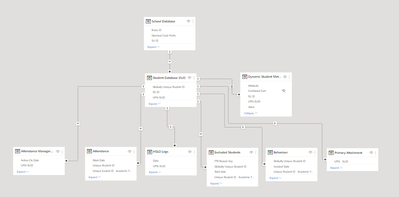

The way I currently do this is I create a single table but for the characteristics that a student has and then use a, and don't hate me, a bi-directional relationship to our student table which subsequently filters all our fact tables.

It looks like this in the model at the moment:

This is why I don't think point 3 will work except maybe as a calculation group but I'm not sure even about that. The other reason I'm trying to do it in Power Query is that I don't have access to the data warehouse source as that's owned and operated by a 3rd party and I think when applying that measure over a 100 million row table in DAX it's going to cripple performance.

That said how would you go about implementing option 2 bearing in mind the need to affirm the negatives in a visual like above?

Thanks again!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @StanleyBlack ,

I've gone back and forth over this and whittled my response down to a few key points which, hopefully, cover off all the questions at hand:

1) I think you should be aiming to achieve a Star Schema model, with distinct dimension tables for Student, Status Identifier (TAG_IDENTIFIER), and any other fields you want to filter on or apply to visual axes. This will give you control over all of your fact tables for this purpose.

2) Regarding the measure running on 100m rows - yes, you would likely experience performance issues here. However, would your data still be 100m rows if you didn't first 'explode' it into daterows?

Per the example file you sent (I'm assuming this is representative of your full current dataset), there's only 27k rows in Enrolments and 75k rows in Context. These kinds of numbers shouldn't cause PBI too much sweat.

Having said that, the 'exploded' table model is legitimate if you want to offload processing time to the refresh, but you should still be aiming for a star schema structure and may still run into performance issues. Using iterator functions would be the obvious scenario that springs to mind.

3) Regarding not affirming the negative - This really depends on whether the student status is always binary between the two options. Looking at your chart, it seems that this might not be the case, so difficult for me to understand the best way forward.

If the status IS always binary, then I would create generic measures for 'TOTAL number in relevant population', 'Number that ARE that thing' and 'Number that AREN'T that thing'. For your ARE measure, you would use the positive data available. For the AREN'T measure, I would essentially do TOTAL minus ARE.

With these measures, in reference to your visual, I would use both the ARE and AREN'T measures in the values field and change the visual to stacked chart, or stacked 100% chart. This will show total relevant population by bar length, broken down by ARE/AREN'T. This both solves the issue at hand and, arguably, improves reporting value to the end user as comparisons will be closer together to digest (for example, on your chart FSM and Not FSM are miles apart and therefore harder for the end user to relate).

Ultimately, I think that your 'Student Context' output from your example PBIX is the wrong way to present data to the model within Power BI. How the data is structured in your data warehouse is going to be very close, if not identical, to the optimum structure for PBI. I think accepting the optimum data structure essentially solves your original question re: nested tables, as well as the exhorbitant row counts.

If you still feel performance is unacceptable, then you can explore the 'exploded' model, but should still aim for normalised or SCD table structures and a star schema model structure.

Pete

Now accepting Kudos! If my post helped you, why not give it a thumbs-up?

Proud to be a Datanaut!

Helpful resources

New to Fabric Survey

If you have recently started exploring Fabric, we'd love to hear how it's going. Your feedback can help with product improvements.

Power BI DataViz World Championships - June 2026

A new Power BI DataViz World Championship is coming this June! Don't miss out on submitting your entry.

Join our Fabric User Panel

Share feedback directly with Fabric product managers, participate in targeted research studies and influence the Fabric roadmap.

| User | Count |

|---|---|

| 5 | |

| 4 | |

| 3 | |

| 3 | |

| 2 |

| User | Count |

|---|---|

| 9 | |

| 8 | |

| 7 | |

| 6 | |

| 5 |