
Issue connecting the Data source

The following data source error appears, probably because the "Shipping" column was moved or removed from the original CSV file (stored on OneDrive):

{"error":{"code":"ModelRefresh_ShortMessage_ProcessingError","pbi.error":{"code":"ModelRefresh_ShortMessage_ProcessingError","parameters":{},"details":[{"code":"Message","detail":{"type":1,"value":"The column 'Shipping' of the table wasn't found."}}],"exceptionCulprit":1}}}

Table: Master Sales History.
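One way to confirm the cause before editing the query is to compare the CSV's header row against the column names the query's later steps expect. The sketch below is a minimal Python illustration run outside Power BI, not a Power BI feature; `EXPECTED_COLUMNS` and `find_missing_columns` are hypothetical names, and the sample rows are invented for the example.

```python
import csv
import io
from typing import List

# Hypothetical list of column names that later query steps refer to
# (for example, a "Changed Type" step). Replace with your own.
EXPECTED_COLUMNS = ["Order ID", "Product", "Quantity", "Shipping"]


def find_missing_columns(csv_text: str, expected: List[str]) -> List[str]:
    """Return the expected column names that are absent from the CSV header row."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader, [])  # first row holds the column names; [] if the file is empty
    present = {name.strip() for name in header}
    return [name for name in expected if name not in present]


if __name__ == "__main__":
    # Sample file in which "Shipping" has been removed from the source.
    sample = "Order ID,Product,Quantity\n1001,Phone case,2\n"
    print(find_missing_columns(sample, EXPECTED_COLUMNS))  # ['Shipping']
```

Any name this reports is a column the refresh will fail on until the query is updated.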


@trademobilemart, open the query in Edit/Transform Data.

Right-click the table's query and open the Advanced Editor. In the column list of the step that sets the data types, carefully remove the "Shipping" column.

If the column was renamed in the source instead, rename it there to match.
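The fix above keeps the data-type step's column list in sync with the file. As a rough Python simulation (not Power Query M), assuming the type step behaves like a mapping from column name to a conversion function, the hypothetical helpers `apply_types` and `fix_type_map` below reproduce the missing-column failure and the repair of dropping or renaming the stale entry.

```python
from typing import Any, Callable, Dict, List, Optional

# Hypothetical model of a "Changed Type" step: column name -> conversion function.
TypeMap = Dict[str, Callable[[str], Any]]


def apply_types(columns: List[str], rows: List[List[str]], type_map: TypeMap) -> List[Dict[str, Any]]:
    """Convert row values with type_map, failing when a mapped column is absent
    (the same situation as the "column ... wasn't found" refresh error)."""
    for name in type_map:
        if name not in columns:
            raise KeyError(f"The column '{name}' of the table wasn't found.")
    result = []
    for row in rows:
        record: Dict[str, Any] = dict(zip(columns, row))
        for name, convert in type_map.items():
            record[name] = convert(record[name])
        result.append(record)
    return result


def fix_type_map(type_map: TypeMap, columns: List[str],
                 renames: Optional[Dict[str, str]] = None) -> TypeMap:
    """Apply any renames, then drop entries whose column no longer exists."""
    renames = renames or {}
    fixed: TypeMap = {}
    for name, convert in type_map.items():
        new_name = renames.get(name, name)
        if new_name in columns:  # keep only columns still present in the file
            fixed[new_name] = convert
    return fixed


if __name__ == "__main__":
    columns = ["Order ID", "Quantity"]  # "Shipping" no longer exists in the file
    rows = [["1001", "2"]]
    type_map: TypeMap = {"Order ID": int, "Quantity": int, "Shipping": float}
    try:
        apply_types(columns, rows, type_map)
    except KeyError as err:
        print("Refresh fails:", err)
    print(apply_types(columns, rows, fix_type_map(type_map, columns)))
    # [{'Order ID': 1001, 'Quantity': 2}]
```

In Power BI itself the equivalent change is made by hand in the query step, as described in the reply.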


Hi @trademobilemart ,

Something to look out for is that CSV files are imported with a fixed number of columns: the number of columns the source had when it was first imported. This means that if columns are later added to your source CSV, the extra columns on the right-hand side of the file are ignored. I'm not sure this is your case, but it's very useful to know if you're dealing with CSV sources.

You can fix this so that the query always picks up every column in the source CSV, as follows:

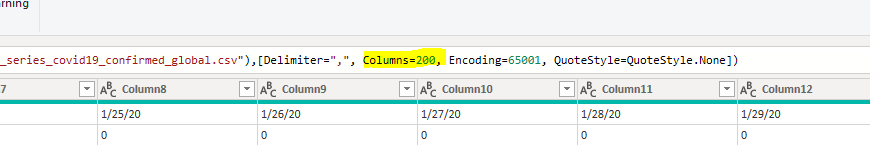

In Power Query, go to your Source step and completely delete the `Columns=N` argument from the `Csv.Document` options here:

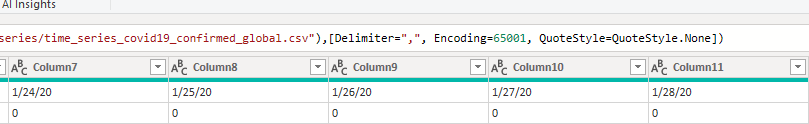

So that it now looks like this:

You will now always import all available columns as they are added to the source.
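To illustrate the behaviour described above, here is a small Python simulation. It assumes a fixed column count behaves like cutting every row to the width recorded at first import (and, as a further assumption, padding short rows with `None`); `read_csv` is a hypothetical helper, not the Power Query `Csv.Document` function.

```python
import csv
import io
from typing import List, Optional


def read_csv(text: str, columns: Optional[int] = None) -> List[List[Optional[str]]]:
    """Parse CSV text. If `columns` is given, each row is cut to that width
    (or padded with None if shorter), loosely mimicking a fixed Columns=N;
    if None, every column present in the file is kept."""
    rows = list(csv.reader(io.StringIO(text)))
    if columns is None:
        return rows
    return [row[:columns] + [None] * (columns - len(row)) for row in rows]


if __name__ == "__main__":
    original = "Order ID,Product\n1001,Phone case\n"
    # The source later gains a third column on the right.
    updated = "Order ID,Product,Shipping\n1001,Phone case,4.99\n"
    width = len(read_csv(original)[0])            # 2: column count at first import
    print(read_csv(updated, columns=width)[0])    # ['Order ID', 'Product']
    print(read_csv(updated)[0])                   # ['Order ID', 'Product', 'Shipping']
```

Removing the fixed width corresponds to the `columns=None` call: the new column is no longer silently dropped.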

Pete

