Huge last-minute discounts for FabCon Vienna from September 15-18, 2025

Supplies are limited. Contact info@espc.tech right away to save your spot before the conference sells out.

Get your discount- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- Quick Measures Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Score big with last-minute savings on the final tickets to FabCon Vienna. Secure your discount

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Convert DAX to M

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Convert DAX to M

Hi All,

I am trying to move all my calculated colums to the query editor which requires to translate DAX statement to M. I am not sure it is always possible. Here is an example where I can't make it work and your help would be much appreciate.

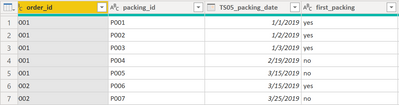

I have one order (order_id) that can be prepared in several sequences (packing_id) with different dates (TS_packing_date). I would like to evaluate the different dates and determine if it corresponds to the first packing. However, I accept a tolerance of +8 days.

The below formula looks for the earliest date (TS05_packing_date) of a specified order reference (order_id) and then compare the packing date (TS05_packing_date) with this earliest date and if [packing date - earliest date] is <=8 then "yes" else "no"

Here is my formula in DAX (it works fine)

first_packing =

IF (

'FACT Order'[TS05_packing_date]

<= CALCULATE (

MIN ( 'FACT Order'[TS05_packing_date] ) + 8;

ALLEXCEPT ( 'FACT Order'; 'FACT Order'[order_id] )

);

"YES";

"NO"

)

and here is the expected result

| order_id | packing_id | TS05_packing_date | first_packing |

| O001 | P001 | 01-janv | yes |

| O001 | P002 | 02-janv | yes |

| O001 | P003 | 03-janv | yes |

| O001 | P004 | 12-févr | no |

| O001 | P005 | 15-mars | no |

| O002 | P006 | 15-mars | yes |

| O002 | P007 | 25-mars | no |

How could I translate the iteration in M language? How could identidy the earliest date of a particular order reference?

Thanks in advance for your help.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @Arnault_ ,

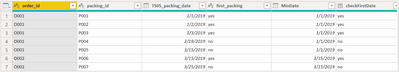

first I had to convert the values from the column TS05_packing_date into something that my Power Query was able to recognize as a date, this means my table looks like this:

Here is my solution that is based on two steps:

1. Determinig the min date per order_id

For this I grouped the table by order_id, but also added the aggregation function "All Rows"

After expanding the group my table looks like this:

2. Adding a custom column to check the date

I added a custom column "checkFirstDate" using this M expression:

if [TS05_packing_date] <= Date.AddDays([MinDate], 😎 then "yes" else "no"Finally the table looks like this, of course the the column "MinDate" can be deleted from the table as an additional step:

Hopefully this provides you with some ideas, how to tackle your requirement.

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @Arnault_ ,

first I had to convert the values from the column TS05_packing_date into something that my Power Query was able to recognize as a date, this means my table looks like this:

Here is my solution that is based on two steps:

1. Determinig the min date per order_id

For this I grouped the table by order_id, but also added the aggregation function "All Rows"

After expanding the group my table looks like this:

2. Adding a custom column to check the date

I added a custom column "checkFirstDate" using this M expression:

if [TS05_packing_date] <= Date.AddDays([MinDate], 😎 then "yes" else "no"Finally the table looks like this, of course the the column "MinDate" can be deleted from the table as an additional step:

Hopefully this provides you with some ideas, how to tackle your requirement.

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello. Can anyone help me convert the following dax code to M query? I have a dataset where "value" is cumulative for a year i.e. YTD. I need monthly value so that I can append this dataset to another table with the same variables where value is "monthly" and NOT YTD. Thank you

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @TomMartens ,

Thank you very much for the explanations and the solution you suggested. It works.

Now I am wondering if it is better than my previous option where it was done in a calculated column. Indeed, the example I shared was a very simplified dataset. Doing the same (group by then expand) with a larger dataset takes much longer. I am not sure it is better in terms of performance. What would you suggest? Keeping it in a calculated column or doing in the query editor?

Thank you again for your great support.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @Arnault_ ,

the answer to your question "is it better than your DAX solution" (slightly rephrased), is simply this: it depends 🙂

To take a decision, it's absolutely necessary to read this article: https://www.sqlbi.com/articles/comparing-dax-calculated-columns-with-power-query-computed-columns/

As there are just 2 unique value "yes" and "no", all the downsides of DAX based columns can be neglected.

On the other hand, here is some M code that does not use the expand columns, instead it uses the M function Table.Join inline, maybe you want to test it with your larger table:

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45Wyi9KSS2Kz0xR0lEqSEzOzsxLh3BCgg1M42EiKYklqUCxtMyi4hKYoFKsTrSSv4GBIVAiAEIZGRha6hoY6oI5lanFaEqMkJUYYVVijKzEGKsSE7gSI11DSyAnLx9NhSlchbGuoSmKCiOICjN0FUjWQJWYIykxghkSCwA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type text) meta [Serialized.Text = true]) in type table [#"(blank)" = _t, #"(blank).1" = _t, #"(blank).2" = _t, #"(blank).3" = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"(blank)", type text}, {"(blank).1", type text}, {"(blank).2", type text}, {"(blank).3", type text}}),

#"Promoted Headers" = Table.PromoteHeaders(#"Changed Type", [PromoteAllScalars=true]),

#"Changed Type1" = Table.TransformColumnTypes(#"Promoted Headers",{{"order_id", type text}, {"packing_id", type text}, {"TS05_packing_date", type date}, {"first_packing", type text}}),

#"Grouped Rows" = Table.Group(#"Changed Type1", {"order_id"}, {{"MinDate", each List.Min([TS05_packing_date]), type date}}),

TableJoin = Table.Join(#"Changed Type1" , "order_id" , #"Grouped Rows" , "order_id" ),

#"Added Custom" = Table.AddColumn(TableJoin, "checkFirstDate", each if [TS05_packing_date] <= Date.AddDays([MinDate], 😎 then "yes" else "no"),

#"Changed Type2" = Table.TransformColumnTypes(#"Added Custom",{{"TS05_packing_date", Int64.Type}})

in

#"Changed Type2"

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @TomMartens ?

I really appreciate the time you dedicate to mly request and thank you for the proposed solution.

Cheers

Helpful resources

| User | Count |

|---|---|

| 65 | |

| 60 | |

| 47 | |

| 33 | |

| 32 |

| User | Count |

|---|---|

| 85 | |

| 75 | |

| 56 | |

| 50 | |

| 45 |