FabCon is coming to Atlanta

Join us at FabCon Atlanta from March 16 - 20, 2026, for the ultimate Fabric, Power BI, AI and SQL community-led event. Save $200 with code FABCOMM.

Register now!- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- Quick Measures Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Calling all Data Engineers! Fabric Data Engineer (Exam DP-700) live sessions are back! Starting October 16th. Sign up.

- Power BI forums

- Forums

- Get Help with Power BI

- DAX Commands and Tips

- Performance Issue with AVERAGEX()

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Performance Issue with AVERAGEX()

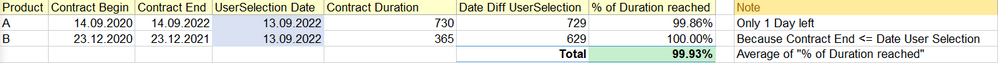

Hi community

I have a scenario where I'd like to calculate a % of reached duration.

Let's assume I have to Contracts A & B with a given contract duration ("Contract Duration").

The report user is now able to select a date from a slicer ("UserSelection Date").

Depending on this UserSelection Date I'd like to know what percentage of the "Contract Duration" was achieved.

- Product A: Because the date difference of the "Contract End" and "UserSelection Date" is only 1 day, 99.86% was achieved yet.

- Product B: Because the "Contract End" is before "UserSelection Date", already 100% were achieved.

- When there would be a product with a "Contract Start" after "UserSelection Date", the % of duration reached would be 0%.

The Total of both products is nearly 100%.

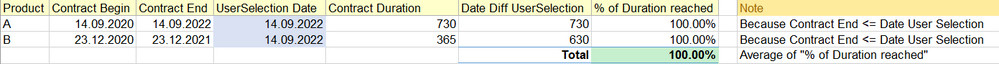

When the user changes the "UserSelection Date" to 14.09.2022 or later, the Total is 100%:

Now the calculation of the single rows is simple ("% of Duration reached Step 1"--> Date Diff UserSelection / Contract Duration)

But the calcuation of the Total differs, because you cannot take the sums of this two values.

That's why I'm calculating "% of Duration reached Step 2" with the AVERAGEX of the "% of Duration reached Step 1". This will return the correct value, but is extremly slow.

I have only around 2 Million rows and I'm using the import mode - but the calculation of the Step 2 takes around 1 Minute.

Additional information: "Product" is just one sample dimension, the formulas should work for other dimensions as well (i.e. vendor).

UserSelection Date = MAX(Calendar[Date])

Contract Duration =

CALCULATE(

SUMX(

myTable,

DATEDIFF ( myTable[Contract Begin], myTable[Contract End], DAY )

)

)

Date Diff UserSelection =

CALCULATE(

SUMX(

myTable,

DATEDIFF ( myTable[Contract Begin], [UserSelection Date], DAY )

)

)

% of Duration reached Step 1 =

IF(

MAX(myTable[Contract End]) < [UserSelection Date],

1,

IF(

MAX(myTable[Contract Begin]) > [UserSelection Date],

0,

[Date Diff UserSelection] / [Contract Duration]

)

)

% of Duration reached Step 2 =

AVERAGEX(

myTable,

[% of Duration reached Step 1]

)

Are there other ways to get the correct total value?

How can I improve the performance of this calculation?

Thank you very much for your support!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

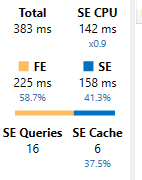

I tried to recreate this with some test data that had 78k rows, so significantly less than your dataset.

Here's the server timings for your measures on this dataset:

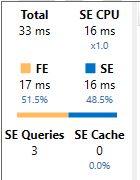

I condensed it all into a single measure...

Avg Duration =

VAR _UserValue = MAX('User Selection'[Date])

RETURN

AVERAGEX(

myTable,

VAR _Num = MIN(_UserValue, myTable[Contract End]) - MIN(_UserValue, myTable[Contract Begin])

VAR _Denom = myTable[Contract End] - myTable[Contract Begin]

RETURN

DIVIDE(_Num, _Denom)

)which gives these server timings. 16 storage engine queries down to 3 and a much faster time (for my test data anyway).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @PaulOlding

Thank you very much for your support!

I've tried your formula and the performance is indeed way better.

Unfortunately I've made a mistake in the definition and the final value should be different.

Let me try to explain the expected results:

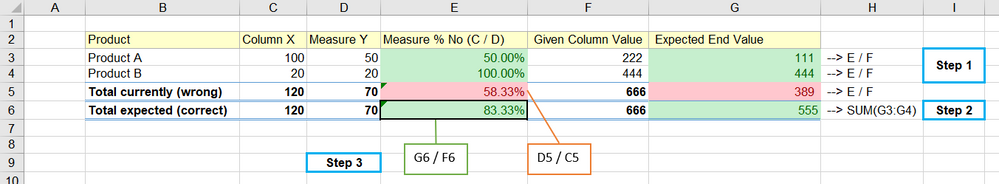

- Step 1: I want to calculate column G based on E and F on the most granular level

- Step 2: For the total of column G (row 5) this calculation won't be right, so I need the sum.

- Step 3: I want to calculate the total of column E, which can't be the same formula than the one on the most granular level (C divided by D) or the average (like in my original post), but I need to divide G6 by F6.

So I need two values which will be different on row level and on total level (G6 & E6) - I guess, there will be might be more than one calculation needed 🙂

Thank you and best regards

Tom

Helpful resources

FabCon Global Hackathon

Join the Fabric FabCon Global Hackathon—running virtually through Nov 3. Open to all skill levels. $10,000 in prizes!

Power BI Monthly Update - October 2025

Check out the October 2025 Power BI update to learn about new features.

| User | Count |

|---|---|

| 10 | |

| 8 | |

| 6 | |

| 4 | |

| 3 |