FabCon is coming to Atlanta

Join us at FabCon Atlanta from March 16 - 20, 2026, for the ultimate Fabric, Power BI, AI and SQL community-led event. Save $200 with code FABCOMM.

Register now!- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Get Fabric Certified for FREE during Fabric Data Days. Don't miss your chance! Request now

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: Same Dimensions, multiple Fact tables, differe...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Same Dimensions, multiple Fact tables, different measures, millions of rows

Hello,

I've been creating/updating/enhansing reports for years, even played a bit with dimensional modeling on very simple cases ( up to 10k rows), but in terms of real dimensional modeling with lots of rows... I'm a complete rookie. I've checked various forums, tried to do a rapide go over the Theory, but ... I'm a bit hard pressed by time and I hope to get some quality/valuable advices from those who are facing such cases on daily basis

My case :

- two different fact tables, with the same dimensions, but different number of measures in each, let's say 10 FKs each ( including Date dimension) and respectively 10 and 15 measures

- 10 commun dimensions

- There will be 2 years worth of data, current year and previous year data based on Fiscal period, where

- Fact Table A will have approx 5-6 millions rows/period

- Fact Table B will have approx 7-8 millions rows/period

- With 24 periods at the end of the year and the only option to use *.csv files as input for Power BI, I'm a bit desparate to fine optimal solution. Needless to say, that data quality is priority number One and performance number Two

- Good news is I have an ETL tool, that can assist me with any data crunching I have to do

Requirement :

Being able to combine data from both fact tables in one visual and compute new measures based on both fact tables.

The way I see it, there are two options :

1) Load the 10 dimensions + 2 fact tables in Power BI and make the Star Schema. What I'm not clear is the correct way to create the schema i.e.

- What I'd do is to connect the two fact tables with all 10 dimensions, but... not sure this is correct.

- something tells me it is not 😞

2) What was done by my predecessor is to join the two facts by the 10 FKs and create one single Fact table. Now, this was working before, as the expected data load was around 15-20 millions rows for 2 years per fact table. However requirements has changed recently and now I'm facing potentially 180-200millions or rows for 2 years per fact table.

Finally the question - what do you suggest I should do ? Should I

1) continue with the creation of 1 fact table

or

2) keep the 2 fact tables separate and rely on Power BI modeling ? If this is the way to go, how should link the tables ?

Best regards,

Pavel

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

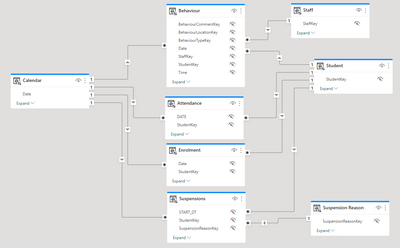

I think you've got exactly the right idea! Give it a go. Every data model is different but for example a normal pattern I use in education has a number of fact tables will shared dimensions:

We often use measures that require calculations from more than one table!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

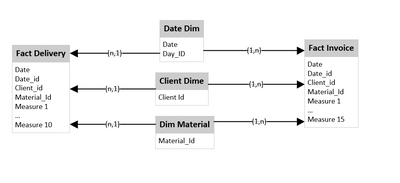

There are several ways of doing this. Have a look at the five most common:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Given your requirements, there are a few potential approaches to consider.

Option 1: Create a star schema with both fact tables

Creating a star schema with both fact tables and shared dimensions is a valid approach. You should connect the fact tables to each dimension, creating a bridge table where necessary. This approach can be more flexible than joining the fact tables together, allowing you to analyze each fact table separately and combine them as needed.

You could also consider partitioning the fact tables by date to improve query performance. This would allow you to query only the relevant partitions for a given time period, rather than scanning the entire table.

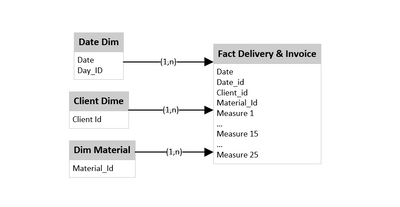

Option 2: Join the fact tables into a single table

Joining the fact tables together into a single table is also a valid approach, but it may not be the most efficient option for your data volume. Combining the tables will result in a larger table, which can be slower to query and consume more memory.

If you choose to join the tables, you should carefully consider the join conditions and ensure that the resulting table is still at the appropriate level of granularity for your analysis.

Recommendation

Given the large data volume you're dealing with, we would recommend creating a star schema with both fact tables and shared dimensions. This approach allows you to analyze each fact table separately and combine them as needed. You should also consider partitioning the fact tables by date to improve query performance.

You should test both approaches on a small subset of your data to evaluate performance and ensure that the resulting schema meets your reporting needs. Additionally, you should work with a data modeling expert to ensure that the schema is properly designed and optimized for your specific requirements.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Ben,

Thank you for your help !

The fact tables contains data on respectively what was delivered and what was invoiced. Data is on client, product and daily level.

I might be able to lower the number of conformed dimensions by using snowflake schema, but I'd like to avoid that as

(1) I saw several articles explaining that Power BI is more favorable performance wise to Star then to Snowflake schema,

(2) dimensions might not be as conformed as they appear ( for example a client might have received its goods at day X, but it will be invoiced on day X+Y, and such small details that at the create difference).

(3) Also most of the dimensions are actually snowflakes of the client dimension. So I prefer to keep the FKs of the snowflakes as separated dimensions and try to compensate with the ETL when/if possible instead of relying on how up to date a possible client dimensions could be ( the fact tables are coming from two different cubes, so even if the dimensions are conformed and the same, I can never be sure that they are both synced at the same time)

If I draw an overall simplified schema, what I guess I should go for is something like this?

Of course at later moment, it might appear that even if the dimensions in the two fact tables/cubes are the same, they are maintained differently, and thus I need to maintain two sets of same dimensions. However today, the assumption is that they are the same, so what I currently have is one very big fat Fact table ( that I'd like to dismantle)

The ETL tool takes 30minutes Today to make the join of the two fact tables and it will be over 1,5h when I have 24 periods instead of 8.

Any additional advice(s) you might have will be greatly appretiated.

Best regards,

Pavel

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The diagram you provide with the two fact tables and 3 dimensions is exactly the way to go. Certainly try and avoid snowflakes.

What are you worried about not working in that setup?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well my main concen is having just some theoritical knowledge ( enphasis on some) without practical experience with such volumes. And this is the first time I'm dealing with combining fact tables into one ( right now I'm trying to fixe someone else's design and would like to avoid making things worse than before) .

Aside from doing it for the 1st time and the amount of data, the main concern is wheter calculated columns based on measures from both fact tables will work correctly ( I guess this is why the person before me went for joining the two tables)

For example -

Calculate (Sum( Fact Delivery[Measure 1] - Sum ( Fact Invoice[Measure 17])

KEEPFILTERS('DimDate'[Period]=5))

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I think you've got exactly the right idea! Give it a go. Every data model is different but for example a normal pattern I use in education has a number of fact tables will shared dimensions:

We often use measures that require calculations from more than one table!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Ben,

Thank you for your help ! Yours and Adamboer's confirmations are what I was hoping for.

Hopefully it will work 🙂

Best regards,

Pavel

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

If the two fact tables contain different "facts" then I'd keep them separate and link them to your common (conformed) dimensions exactly ad you describe. It would though help if you described what is contained in the two fact tables. This is sometimes called a galaxy schema but I prefer to just think of it as multiple star schema. As long as the dimensions are common it'll work perfectly.

Helpful resources

Power BI Monthly Update - November 2025

Check out the November 2025 Power BI update to learn about new features.

Fabric Data Days

Advance your Data & AI career with 50 days of live learning, contests, hands-on challenges, study groups & certifications and more!