A new Data Days event is coming soon!

This time we’re going bigger than ever. Fabric, Power BI, SQL, AI and more. We're covering it all. You won't want to miss it.

Learn more- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

Level up your Power BI skills this month - build one visual each week and tell better stories with data! Get started

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Performance : calculating Moving average

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Performance : calculating Moving average

Hi Everyone,

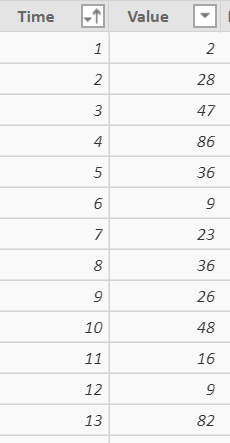

I am facing some performance issues when i try to calculate moving average on data. I do not have time dimension but i have some indexes that represent time (1,2,3,...) and some value. So you could test that with a simple table with two columns, 1 for indexes, 1 for values. What i want to perform is a MA with 10 indexes back and 10 forward. So i use this formula :

MovingAverageMeasure2 =

AVERAGEX(

FILTER(ALL(DataForMA);

AND(

DataForMA[Time] -10<= MAX(DataForMA[Time]);

DataForMA[Time] +10>= MAX(DataForMA[Time])));

DataForMA[Value])

This works but i am badly surprised of the poor performance of that calculation. Here, i ve testes it on a table that has only 4000 rows but if i increase that number to 20000 for example, refresh time then become terrible. (we talk about minutes)

I ve tried the same calculations in T*****, i ve got no problems, refresh is nearly immediate.

The only solution i ve found is to precalculate the MA Points in a new Dax column and the plot the value.

(However, Preloading time is still long and you can't for example modify the number of indexes back or forward with a parameter)

Mov_Average_Column=

var DynamicTable =

CALCULATETABLE(

DataForMA;

ALL(DataForMA);

AND(

DataForMA[Time] -10<= EARLIER(DataForMA[Time]);

DataForMA[Time] +10>= EARLIER(DataForMA[Time])))

return

AVERAGEX(DynamicTable; DataForMA[Value])So, could you tell me if i am missing something? Should I try other formulas ?

Thanks in advance for your answers 🙂

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous -

The query is slow because there are no grouping indexes. Without index(es) to group the rows, it will iterate the entire table for each row.

The solution that I tried earlier didn't group them into big enough groups. You will need to optimize the exact number and size of grouping, but the concept is this:

//Create the Table

Moving Average DAX =

ADDCOLUMNS(

GENERATESERIES(1,20000,1),

"MyValue",

CEILING(RAND()*100,1)

)

//Rename the first column idx, for clarity

//Calculated Columns:

//This consists of a pair of groups at each level, to cover forward and backward. You can adjust the number and size of groups, but the smallest group needs to cover the necessary range.

GroupLarge1 = rounddown(DIVIDE('Moving Average DAX'[idx], 500),0)

GroupLarge2 = rounddown(DIVIDE('Moving Average DAX'[idx] + 250, 500),0)

GroupSmall1 = rounddown(DIVIDE('Moving Average DAX'[idx], 20),0)

GroupSmall2 = rounddown(DIVIDE('Moving Average DAX'[idx] + 10, 20),0)

//Measure:

//

Moving Average =

var cur_idx = MAX('Moving Average DAX'[idx])

var cur_grp1 = MAX('Moving Average DAX'[GroupSmall1])

var cur_grp2 = MAX('Moving Average DAX'[GroupSmall2])

var mytablarge = DISTINCT(

UNION(

ALLEXCEPT('Moving Average DAX', 'Moving Average DAX'[GroupLarge1]),

ALLEXCEPT('Moving Average DAX', 'Moving Average DAX'[GroupLarge2])

)

)

//If you want to add more grouping levels, you'll need to create a pair of variables for each level and create a table variable for each level

var mytabsmall = DISTINCT(

UNION(

FILTER(mytablarge, [GroupSmall1] = cur_grp1),

FILTER(mytablarge, [GroupSmall2] = cur_grp2)

)

)

return AVERAGEX(

FILTER(

mytabsmall,

AND([idx] -10<= cur_idx , [idx] +10>= cur_idx)

),

[MyValue]

)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Anonymous -

Try this:

MovingAverageMeasure2 =

var cur_time = MAX(DataForMA[Time])

CALCULATE(

AVERAGE(DataForMA[Value]);

FILTER(

ALL(DataForMA);

AND(

DataForMA[Time] -10<= cur_time;

DataForMA[Time] +10>= cur_time

)

)

);Hope this helps,

Nathan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Nathan,

I had also tried a version of the measure with a calculate instead of an averageX (same as yours actually). This is also working but it still has terrible performance issues. And assigning the MAX to a variable does not improve the process 😞

But Thank you for you answer 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous

There is a problem because the entire table is iterated for each row. One possible remedy:

1. Add a couple of grouping indexes with Calculated Columns:

TimeGroup1 = rounddown(DateForMA[Time] + 15/ 20,0) TimeGroup2 = rounddown(DateForMA[Time] + 5/ 20,0)

2. Instead of AVERAGE calculation, find the sum and then count of all that are in at least one of the 2 groupings, and then divide the sum by the count:

MovingAverageMeasure2 = var cur_time = MAX(DataForMA[Time]) var sum_values = CALCULATE( SUM(DataForMA[Value]); ALLEXCEPT(DataForMA[TimeGroup1]) ) + CALCULATE( SUM(DataForMA[Value]); ALLEXCEPT(DataForMA[TimeGroup2]) ) var count_values = CALCULATE( COUNT(DataForMA[Value]); ALLEXCEPT(DataForMA[TimeGroup1]) ) + CALCULATE( COUNT(DataForMA[Value]); ALLEXCEPT(DataForMA[TimeGroup2]) ) return DIVIDE(sum_values, count_values)

Hope this helps,

Nathan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous -

Just realized this was wrong...

The calculated columns are good. But for the calculation, you need to get the distinct list of relevant times and then do your original AverageX.

Something like this:

var cur_time = MAX(DataForMA[Time])

var mytab = UNION(ALLEXCEPT(DataForMA; DataForMA[TimeGroup1]); ALLEXCEPT(DataForMA; DataForMA[TimeGroup2])

var mytabdistinct = summarize(mytab;[Time];"Value";max([Value]))

AVERAGEX(

[Value];

FILTER(

mytabdistinct ;

AND([Time] -10<= cur_time ; [Time] +10>= cur_time)

)

)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

One more try:

MovingAverageMeasure2 =

var cur_time = MAX(DataForMA[Time])

var mytab = UNION(

ALLEXCEPT(DataForMA; DataForMA[TimeGroup1]);

ALLEXCEPT(DataForMA; DataForMA[TimeGroup2])

)

var mytabdistinct = summarize(mytab;[Time];"Value";max([Value]))

AVERAGEX(

FILTER(

mytabdistinct ;

AND([Time] -10<= cur_time ; [Time] +10>= cur_time)

);

[Value]

)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Nathan,

I ve tested your solution, however performance is still very poor.

Thanks for your answers anyway 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

What does your DataForMA Table look like? Is it a Dimension / Fact / or Somewhere in between?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Nick,

You can test that with a simple table with 2 columns.

You can generate a sample by creating a dax table and using the following code ;

Data = ADDCOLUMNS( GENERATESERIES(1;20000;1); "Number";CEILING(RAND()*100;1))

just rename the first column index or time.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I was more wondering about the # of rows, columns, and the cardinality.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well, Just 1 table and 20000 rows, so this is really a simple structure 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is there any way to get those # of rows lower? If you use Averagex and then Filter you are iterating it twice, which is why you seeing performance degradation.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Have you tried using summarize()? Not at my PBI computer so cant test it out but you should be able to do a AverageX over a sumarized table. The summariezed table would still be used for every row but is much smaller the your entire dataset.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

the table on which is performed the average depends on each row from the source table. And in my humble opinion , this moving subset table has to be filtered, not summarized, as it s not an aggregated table. But i Might be wrong 🙂

@Anonymous

Hi,

Well, the data has to stay at that granularity. (Basically, it is what my client wanted). So source table cannot be aggregated or filtered.

As i ve said in my original post, i am surprised that such a powerful language as DAX can't handle this kind of problem otherwise than by solutions consisting in the reduction of the source table. (After all 20000 rows isn't that much).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous -

The query is slow because there are no grouping indexes. Without index(es) to group the rows, it will iterate the entire table for each row.

The solution that I tried earlier didn't group them into big enough groups. You will need to optimize the exact number and size of grouping, but the concept is this:

//Create the Table

Moving Average DAX =

ADDCOLUMNS(

GENERATESERIES(1,20000,1),

"MyValue",

CEILING(RAND()*100,1)

)

//Rename the first column idx, for clarity

//Calculated Columns:

//This consists of a pair of groups at each level, to cover forward and backward. You can adjust the number and size of groups, but the smallest group needs to cover the necessary range.

GroupLarge1 = rounddown(DIVIDE('Moving Average DAX'[idx], 500),0)

GroupLarge2 = rounddown(DIVIDE('Moving Average DAX'[idx] + 250, 500),0)

GroupSmall1 = rounddown(DIVIDE('Moving Average DAX'[idx], 20),0)

GroupSmall2 = rounddown(DIVIDE('Moving Average DAX'[idx] + 10, 20),0)

//Measure:

//

Moving Average =

var cur_idx = MAX('Moving Average DAX'[idx])

var cur_grp1 = MAX('Moving Average DAX'[GroupSmall1])

var cur_grp2 = MAX('Moving Average DAX'[GroupSmall2])

var mytablarge = DISTINCT(

UNION(

ALLEXCEPT('Moving Average DAX', 'Moving Average DAX'[GroupLarge1]),

ALLEXCEPT('Moving Average DAX', 'Moving Average DAX'[GroupLarge2])

)

)

//If you want to add more grouping levels, you'll need to create a pair of variables for each level and create a table variable for each level

var mytabsmall = DISTINCT(

UNION(

FILTER(mytablarge, [GroupSmall1] = cur_grp1),

FILTER(mytablarge, [GroupSmall2] = cur_grp2)

)

)

return AVERAGEX(

FILTER(

mytabsmall,

AND([idx] -10<= cur_idx , [idx] +10>= cur_idx)

),

[MyValue]

)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous ,

I got the idea, this is really nice 🙂

Thanks for your time and explanations !

Piu

Helpful resources

Power BI Monthly Update - April 2026

Check out the April 2026 Power BI update to learn about new features.

Data Days 2026 coming soon!

Sign up to receive a private message when registration opens and key events begin.

New to Fabric Survey

If you have recently started exploring Fabric, we'd love to hear how it's going. Your feedback can help with product improvements.

| User | Count |

|---|---|

| 35 | |

| 32 | |

| 25 | |

| 22 | |

| 18 |

| User | Count |

|---|---|

| 65 | |

| 35 | |

| 32 | |

| 25 | |

| 23 |