- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- DAX to remove rows that are similar but comparing ...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

DAX to remove rows that are similar but comparing different columns/rows

Hi Community,

hoping you can assist please.

I am trying to remove rows from my table using DAX based on what are considered as duplicate.

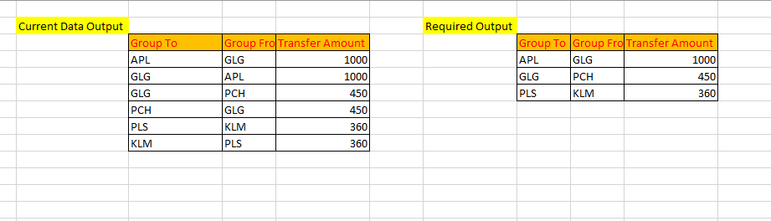

For example from the screenshot below APL to GLG and GLG to APL is the same transfer i need it to only show one row.

Any help would be appreciated

| Group To | Group From | Transfer Amount |

| APL | GLG | 1000 |

| GLG | APL | 1000 |

| GLG | PCH | 450 |

| PCH | GLG | 450 |

| PLS | KLM | 360 |

| KLM | PLS | 360 |

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi community,

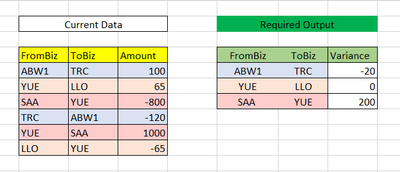

hoping you can assist. I am trying to create a variance to show the balance between inter related entities.

Please see below of my current data and my required output

I have provided any updated screenshot as this hopefully will clarify the current data output and issue.

Thanks

@Ahmed

@Jihwan_Kim

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @databot_kd ,

If I understand correctly, the issue is that you want to remove rows. Please try the following methods and check if they can solve your problem:

1.Create the simple table.

2.Create a calculated column that generate a unique identifier for each transfer.

Column =

VAR GroupFrom = [FromBiz]

VAR GroupTo = [ToBiz]

VAR MinGroup = MIN(GroupFrom, GroupTo)

VAR MaxGroup = MAX(GroupFrom, GroupTo)

RETURN

CONCATENATE(MinGroup, MaxGroup)3.Create a new table Table 2 that removes duplicates based on the column.

Table 2 =

SUMMARIZE(

'Table',

'Table'[Column],

"FromBiz", MINX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [FromBiz]),

"ToBiz", MAXX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [ToBiz]),

"Amount", SUMX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [Amount])

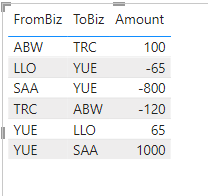

)4.The result is shown below.

Looking forward to your reply.

Best Regards,

Wisdom Wu

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi community,

hoping you can assist. I am trying to create a variance to show the balance between inter related entities.

Please see below of my current data and my required output

I have provided any updated screenshot as this hopefully will clarify the current data output and issue.

Thanks

@Ahmed

@Jihwan_Kim

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @databot_kd ,

If I understand correctly, the issue is that you want to remove rows. Please try the following methods and check if they can solve your problem:

1.Create the simple table.

2.Create a calculated column that generate a unique identifier for each transfer.

Column =

VAR GroupFrom = [FromBiz]

VAR GroupTo = [ToBiz]

VAR MinGroup = MIN(GroupFrom, GroupTo)

VAR MaxGroup = MAX(GroupFrom, GroupTo)

RETURN

CONCATENATE(MinGroup, MaxGroup)3.Create a new table Table 2 that removes duplicates based on the column.

Table 2 =

SUMMARIZE(

'Table',

'Table'[Column],

"FromBiz", MINX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [FromBiz]),

"ToBiz", MAXX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [ToBiz]),

"Amount", SUMX(FILTER('Table', 'Table'[Column] = EARLIER('Table'[Column])), [Amount])

)4.The result is shown below.

Looking forward to your reply.

Best Regards,

Wisdom Wu

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

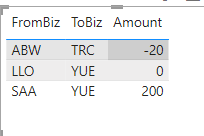

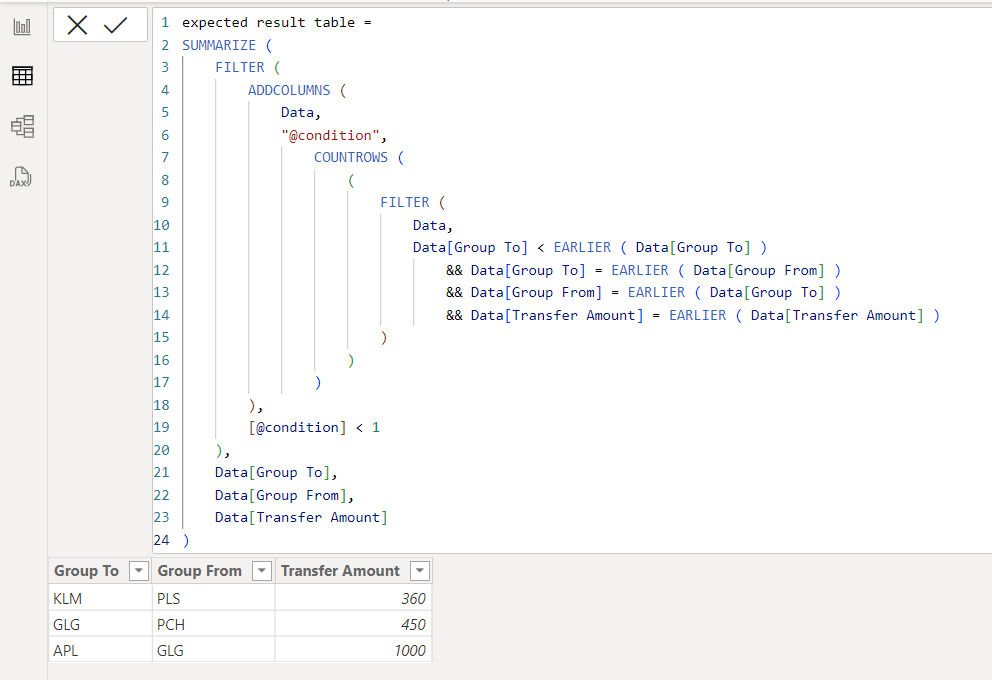

I am not sure if I understood your question correctly, but please check the below picture and the attached pbix file whether it suits your requirement.

If this post helps, then please consider accepting it as the solution to help other members find it faster, and give a big thumbs up.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Jihwan_Kim , thanks for asssiting.

Tried this had a issues when using EARLIER for the amount as the amount is a measure coming from another table. Apologies i should have mentioned that.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@databot_kd , Create a measure like this use with Group To , Group From in visual

measure =Sumx(summarize(Table, Table[Group To],Table[Group From], Table[Transfer Amount]),Table[Transfer Amount])

Microsoft Power BI Learning Resources, 2023 !!

Learn Power BI - Full Course with Dec-2022, with Window, Index, Offset, 100+ Topics !!

Did I answer your question? Mark my post as a solution! Appreciate your Kudos !! Proud to be a Super User! !!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @amitchandak ,

Thanks for assisting. I tried the measure you provided but i get the same result as before.

Not sure if this is cause the amount is coming from a different table. Would you have any other thoughts on where i am going wrong?

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 112 | |

| 100 | |

| 76 | |

| 64 | |

| 63 |

| User | Count |

|---|---|

| 140 | |

| 105 | |

| 102 | |

| 81 | |

| 67 |