Architecture ideas for partitioned Parquet files in ADLS

Hi all,

I'm curious to find the best way to work with multiple Parquet files that are partitioned by day.

As our tables grew too big, we had to move away from SQL DB and decided to let our Databricks notebooks write Parquet files to ADLS. Our first idea was to create virtual tables with PolyBase in our existing Azure SQL and read from those with Power BI, but unfortunately it does not support PolyBase.

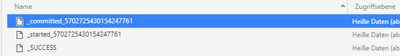

I also tried to read those Parquet files directly with the new Power BI connector, but that did not work because of the following issue: each folder also contains the "started", "committed" and "Success" files created by Spark, and with those present the Parquet files can't be combined.
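To make the problem concrete, here is a minimal sketch of the filtering step that has to happen somewhere before the files are combined: keep only the Parquet data files and skip the Spark marker files. The folder layout (`date=YYYY-MM-DD`), the example file names, and the helper names are assumptions for illustration, not taken from the actual lake.

```python
from pathlib import PurePosixPath


def is_parquet_data_file(path: str) -> bool:
    """Return True for Parquet data files, False for marker or hidden files."""
    name = PurePosixPath(path).name
    # Marker files are assumed to start with "_" and hidden files with ".".
    return name.endswith(".parquet") and not name.startswith(("_", "."))


def select_data_files(paths):
    """Keep only the entries of a folder listing that look like Parquet data."""
    return [p for p in paths if is_parquet_data_file(p)]


# Hypothetical listing of one daily partition folder.
listing = [
    "sales/date=2020-11-01/_started_123",
    "sales/date=2020-11-01/_committed_123",
    "sales/date=2020-11-01/_SUCCESS",
    "sales/date=2020-11-01/part-00000.snappy.parquet",
]
data_files = select_data_files(listing)
```

The same rule (file name ends with `.parquet`) could be applied as a filter on the folder listing in whichever tool sits in front of Power BI.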

So I think there should be something between the Parquet files and Power BI. With the SQL DB we also created views to limit the data size (e.g. data from the past two years) depending on dashboard needs.
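Because the files are partitioned by day, the "view" that limits the data could in principle be a selection of partition folders rather than a row filter. A rough sketch of that idea, assuming (hypothetically) that each folder name contains `date=YYYY-MM-DD`; the function names are made up for this example:

```python
import re
from datetime import date

# Assumed partition naming: ".../date=2020-11-30/..."
PARTITION_RE = re.compile(r"date=(\d{4})-(\d{2})-(\d{2})")


def partition_date(path: str):
    """Extract the partition day from a path, or None if there is none."""
    match = PARTITION_RE.search(path)
    if match is None:
        return None
    year, month, day = map(int, match.groups())
    return date(year, month, day)


def years_before(today: date, years: int) -> date:
    """Same calendar day `years` earlier; Feb 29 falls back to Feb 28."""
    try:
        return today.replace(year=today.year - years)
    except ValueError:
        return today.replace(year=today.year - years, day=28)


def recent_partitions(paths, today: date, years: int = 2):
    """Keep only partition folders whose day is within the last `years` years."""
    cutoff = years_before(today, years)
    selected = []
    for path in paths:
        day = partition_date(path)
        if day is not None and day >= cutoff:
            selected.append(path)
    return selected


# Hypothetical folder names.
folders = ["sales/date=2018-11-30", "sales/date=2018-12-01", "sales/date=2020-11-30"]
recent = recent_partitions(folders, today=date(2020, 12, 1))
```

Only the selected folders would then need to be read, which keeps the data size in line with what each dashboard needs.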

I suppose I'm not the only one with that "problem". Could you please share best practices for that?

Could be any technology or method.

Good to know: we have an Azure pipeline with Data Factory and Databricks in the back.

Many thanks,

Massimo

Hi @moritzmassimo ,

I noticed that the Parquet connector has been available since September this year. If this case can be simplified to a connection between Parquet and Power BI, you may try the Parquet connector. Below is a related reference:

https://parquet.apache.org/documentation/latest/

Best Regards,

Kelly

Did I answer your question? Mark my reply as a solution!
