
Pipeline Activity with multiple conditions

Hi folks,

I have a really basic question that I can't find the answer to:

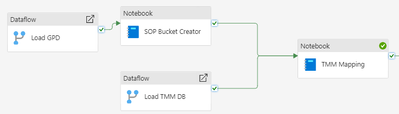

Will the "TMM Mapping" notebook in the example above wait for both "SOP Bucket Creator" and "Load TMM DB" before it starts to run?

Thanks for your help!

Joachim


Hi @JoachimSA

Thanks for using Fabric Community.

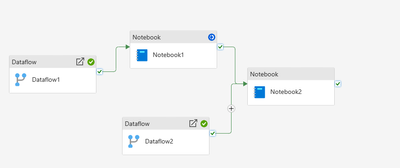

I have created a repro of your scenario:

1) Initially, Dataflow1 and Dataflow2 start running.

2) After this, Notebook1 starts running.

3) If both Notebook1 and Dataflow2 run successfully, Notebook2 starts running.
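The ordering in the three steps above can be reproduced with a small Python sketch. It is not Fabric code, only a toy model that ignores run durations and groups activities into "waves". The dependency map is an assumption read off the steps: in particular, that Notebook1 depends on Dataflow1 is inferred from step 2 and is not confirmed by the screenshot.

```python
def start_waves(depends_on):
    """Group activities into waves. An activity lands in the first wave
    in which all of its dependencies have already finished.
    Durations are ignored, so this is only a rough ordering model."""
    done = set()
    remaining = dict(depends_on)
    waves = []
    while remaining:
        ready = sorted(
            name for name, deps in remaining.items() if set(deps) <= done
        )
        if not ready:
            raise ValueError("cycle or unknown dependency")
        waves.append(ready)
        done.update(ready)
        for name in ready:
            del remaining[name]
    return waves


# Hypothetical dependency map for the repro pipeline.
repro = {
    "Dataflow1": [],
    "Dataflow2": [],
    "Notebook1": ["Dataflow1"],               # assumption, see lead-in
    "Notebook2": ["Notebook1", "Dataflow2"],  # waits for both
}

print(start_waves(repro))
# [['Dataflow1', 'Dataflow2'], ['Notebook1'], ['Notebook2']]
```

Activities with an empty dependency list all appear in the first wave, which matches the "starts on pipeline start" behaviour described in the thread.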

Hope this helps. Please let me know if you have any further questions.


Perfect, thank you!

Just to confirm: all activities without a condition will be triggered on pipeline start?

I have a scenario where I want to switch off certain parts of the pipeline depending on which input data changed in the background, so that I don't need to run through everything for every small update of some side info.

Are there any resources on how to switch off certain branches of the tree of activities?

If an activity is switched off but is still connected to the following node, it will be taken out of the condition for the following node to run, right?

I just wasn't able to find good documentation on these very basic questions... do you have a hint for me?

Thanks and best regards,

Joachim


Can you please provide a simple PPT / visual representation of what you want to achieve?

To answer your query: yes, all activities without any dependency run in parallel on pipeline start.

You can switch activities off using several approaches, such as an If or Switch activity, or via dependencies.

A visual representation of your ask would help us help you better.


Hi Nandan,

So, depending on which inputs changed, I would like to easily exclude each of the 3 highlighted blocks individually from updating.

As you can see, at the moment I have just deactivated some of them. Would that be the way to go? Longer branches get increasingly cumbersome to switch on and off.

Also: each activity writes a Delta table at the end, which is then used by the next one. Is that the optimal way when I work in a Lakehouse?


You can create a parameter at the pipeline level and then use an If activity to either run those flows or skip them and proceed.

So rather than just disabling the activities, you can pass the parameter at run time to either enable or skip the flows.
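As a rough illustration of that approach, the Python sketch below builds a dictionary shaped like the JSON that Azure Data Factory style pipelines use for a parameter and an If activity. Treat the field names as an assumption and compare them with the JSON view of your own pipeline. The parameter name `runTmmBranch` and the activity name `If run TMM branch` are hypothetical, and the inner activity definition is left out on purpose.

```python
import json

# Hypothetical pipeline-level parameter; pass true/false at run time.
parameters = {
    "runTmmBranch": {"type": "bool", "defaultValue": True},
}

# Sketch of an If activity that wraps one branch of the pipeline.
if_activity = {
    "name": "If run TMM branch",  # hypothetical name
    "type": "IfCondition",
    "dependsOn": [],
    "typeProperties": {
        "expression": {
            # Evaluates the pipeline parameter at run time.
            "value": "@pipeline().parameters.runTmmBranch",
            "type": "Expression",
        },
        # Activities that run only when the parameter is true.
        # The full activity definition is omitted here.
        "ifTrueActivities": [{"name": "Load TMM DB"}],
        # Nothing runs when the parameter is false: the branch is skipped.
        "ifFalseActivities": [],
    },
}

pipeline = {
    "properties": {
        "parameters": parameters,
        "activities": [if_activity],
    }
}

print(json.dumps(pipeline, indent=2))
```

One If activity per block, each driven by its own parameter, would let you include or skip the blocks independently without deactivating activities by hand.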


Thanks, I think I can figure something out 🙂

Are there any good examples / best practices / tutorials on this? I feel like most of the material I find online doesn't cover these kinds of things.


Yes, it waits for both of the previous activities to complete, and to complete successfully, before it starts executing.

The dependencies in data pipelines act as an AND expression.

Reference : https://datasharkx.wordpress.com/2024/02/17/error-logging-and-the-art-of-avoiding-redundant-activiti...
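To make the AND behaviour concrete, here is a minimal Python sketch of the rule. It is only a model, not how the service is implemented. The function name `can_start` and the status strings are assumptions, and it covers only "on success" dependencies (pipelines also offer other dependency conditions, such as on failure or on completion).

```python
def can_start(depends_on, status):
    """Return True only if every upstream activity has succeeded.
    all() over an empty list is True, so an activity without
    dependencies can start right away."""
    return all(status.get(dep) == "Succeeded" for dep in depends_on)


tmm_mapping_deps = ["SOP Bucket Creator", "Load TMM DB"]

# Only one upstream activity has finished: keep waiting.
print(can_start(tmm_mapping_deps, {"SOP Bucket Creator": "Succeeded"}))
# False

# Both succeeded: TMM Mapping can start.
print(can_start(tmm_mapping_deps, {
    "SOP Bucket Creator": "Succeeded",
    "Load TMM DB": "Succeeded",
}))
# True

# One failed: the AND condition is not met.
print(can_start(tmm_mapping_deps, {
    "SOP Bucket Creator": "Succeeded",
    "Load TMM DB": "Failed",
}))
# False

# No dependencies at all: starts on pipeline start.
print(can_start([], {}))
# True
```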
