Connect ADF Copy Data to Fabric Data Lake House

Hi,

I am using my corporate account. Can someone help me with the settings/permissions required to connect an ADF Copy Data activity to a Fabric Lakehouse?

I tried, but I am not able to connect to the Fabric account from the Azure account.

Hi Nikhil,

Basically, I would like to read a .csv file from an Azure Storage container using an ADF pipeline and push the data into a Fabric Lakehouse.

I followed the article "How to ingest data into Fabric using the Azure Data Factory Copy activity - Microsoft Fabric | Micro..." to do this, but there appears to be an authentication problem.

Hi @ThiyagarajanLG

Thanks for providing the details.

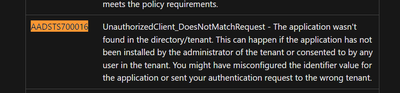

The error you’re encountering is related to Azure Active Directory authentication. Here’s a breakdown of the issue:

- Error Code: 27370

- Issue: Failure to obtain an access token using a service principal.

- Error Message: AADSTS700016 (the application with the given identifier was not found in the directory)

https://learn.microsoft.com/en-us/entra/identity-platform/reference-error-codes

Solutions:

- Verify Application Registration:
  - Access the Azure portal and sign in as a global administrator for the "DEVRT" directory.
  - Navigate to Azure Active Directory > App registrations.
  - Search for the application ID "86cdd993-a314-4221-8e6b-bb9a19e5ebc9".
  - If the application isn't found, you'll need to register it in the "DEVRT" directory.

- Check Consent (if applicable):
  - If the application is found, ensure it's either a public application (doesn't require user consent) or has been consented to by a user with the necessary permissions in the "DEVRT" directory.

- Confirm Tenant Name:
  - Double-check the Azure AD tenant name you're using for authentication. It should be "DEVRT" in this case.
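One way to check the service principal outside ADF is to request a token directly with the OAuth 2.0 client-credentials flow. The following is a minimal sketch, not the method ADF uses internally. The function names `build_token_request` and `check_service_principal` are hypothetical, and the default scope `https://storage.azure.com/.default` is an assumption about the audience OneLake accepts; adjust it if your setup differs. If the application is missing from the tenant, the response should carry the same AADSTS700016 description.

```python
import json
import urllib.error
import urllib.parse
import urllib.request

AUTHORITY = "https://login.microsoftonline.com"


def build_token_request(tenant_id, client_id, client_secret,
                        scope="https://storage.azure.com/.default"):
    """Build the URL and form body for a client-credentials token request.

    The default scope is an assumption (storage audience); change as needed.
    """
    url = f"{AUTHORITY}/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": scope,
    }
    return url, urllib.parse.urlencode(form).encode("utf-8")


def check_service_principal(tenant_id, client_id, client_secret):
    """Send the token request and return a short status string."""
    url, body = build_token_request(tenant_id, client_id, client_secret)
    request = urllib.request.Request(url, data=body, method="POST")
    try:
        with urllib.request.urlopen(request, timeout=30) as response:
            json.load(response)  # the token itself is not needed here
            return "ok: token issued"
    except urllib.error.HTTPError as err:
        text = err.read().decode("utf-8", errors="replace")
        try:
            detail = json.loads(text).get("error_description", text)
        except json.JSONDecodeError:
            detail = text
        return f"failed: {detail}"


# Placeholder values only; nothing is sent over the network here.
url, _ = build_token_request("<tenant-id>", "<application-id>", "<secret>")
print(url)
```

Calling `check_service_principal` with the tenant ID, application ID, and secret configured in the ADF linked service shows whether the failure reproduces outside the pipeline.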

Please refer to these links for more help:

https://sendlayer.com/docs/error-aadsts700016-application-with-identifier-not-found-in-the-directory...

https://learn.microsoft.com/en-us/answers/questions/692461/message-aadsts700016-application-with-ide...

https://stackoverflow.com/questions/57324634/aadsts700016-application-with-identifier-some-id-was-no...

Hope this helps. Please let me know if you have any further issues.

Thank you for the explanation.

Can you suggest any other method to transfer the data from ADF to a Fabric Lakehouse (using the ADF pipeline Copy Data activity or any other way)?

Please let me know.

Hi @ThiyagarajanLG

You can follow these steps:

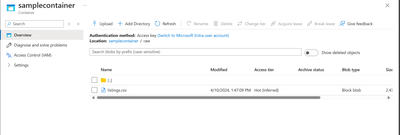

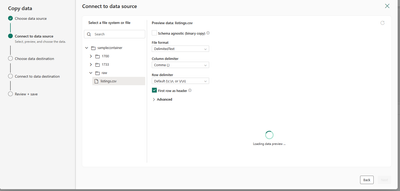

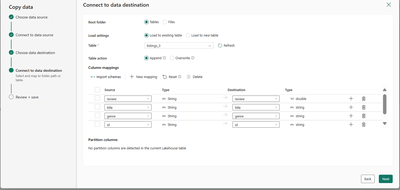

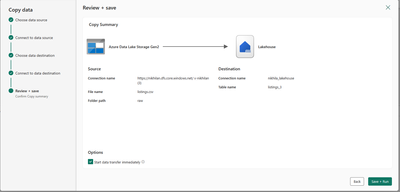

I have a CSV file named listings in the ADLS Gen2 storage container.

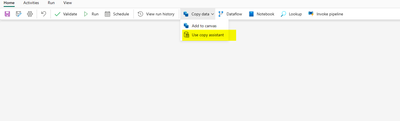

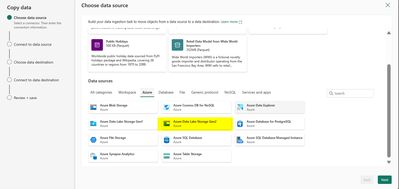

I have created a pipeline named Pipeline3 in the Fabric workspace. You can use the Copy assistant.

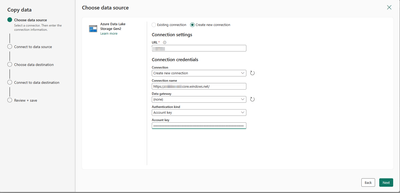

Create a new connection.

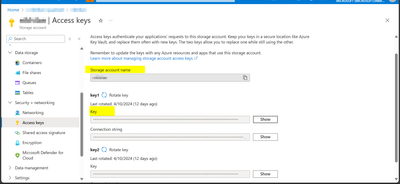

You can find the storage account name and key under Access Keys. I have used Account Key as the authentication kind.

After the connection succeeds, select the required file.

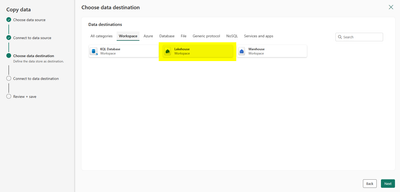

Select the destination as Lakehouse.

Click Save + Run. The data is loaded into the Lakehouse table.

Hope this helps. Please let me know if you have any further questions.

Thank you again for your help.

I believe you are pulling the data from ADF using a Fabric pipeline, but I would like to push the data from ADF to Fabric using an ADF pipeline.

I have one more question. In an ADF pipeline, if I use an XML file as the source in a Copy Data activity, the data preview shows the XML converted to JSON, even though I set the source format to XML.

How do I retain the XML format from source to destination in the Copy Data activity?

Hi @ThiyagarajanLG

For your first question, you can use Fabric pipelines, as they offer a more convenient approach. Why do you want to use only ADF pipelines? I am just asking to understand the scenario.

Thanks

Hi Nikhilan,

My requirement is to read a full XML file from a container and store the full file (retaining the XML format) in a single cell of a Lakehouse table.

For example, suppose I have the XML file sample.xml as below:

<?xml version="1.0"?> <catalog> <book id="bk101"> <author>Gambardella, Matthew</author> <title>XML Developer's Guide</title> </book> </catalog>

I want to read the XML file and store it in the Lakehouse as shown below. Since ADF is more powerful than Fabric, I thought of using an ADF-to-Fabric push mechanism.

| RowID | Updatedby | xmlContent |
|---|---|---|
| 1 | 393 | <?xml version="1.0"?> |

Hi @ThiyagarajanLG

Is the data getting converted to JSON in the destination table?

One possible workaround is to use notebooks in Fabric for this scenario.

Please refer to this link: Solved: Spark XML does not work with pyspark - Microsoft Fabric Community

You can write the data to a Lakehouse table using Spark code in notebooks.
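As a rough illustration of the notebook approach, the sketch below keeps the whole XML document as a single string value, matching the RowID / Updatedby / xmlContent layout from the question. The helper names `xml_to_row` and `file_to_row` are hypothetical, and the commented Spark lines assume a Fabric notebook with a `spark` session and an attached Lakehouse; the table name `xml_documents` is made up for the example.

```python
import xml.etree.ElementTree as ET
from pathlib import Path


def xml_to_row(xml_text, row_id, updated_by):
    """Return one table row that holds the whole XML document as a string."""
    # Parse only to confirm the document is well-formed. The original text,
    # not a re-serialized tree, is what goes into the row.
    ET.fromstring(xml_text)
    return {"RowID": row_id, "Updatedby": updated_by, "xmlContent": xml_text}


def file_to_row(path, row_id, updated_by):
    """Read an XML file as plain text and wrap it in a single row."""
    text = Path(path).read_text(encoding="utf-8")
    return xml_to_row(text, row_id, updated_by)


# The sample document from the question, kept as one string.
sample = (
    '<?xml version="1.0"?> <catalog> <book id="bk101"> '
    "<author>Gambardella, Matthew</author> "
    "<title>XML Developer's Guide</title> </book> </catalog>"
)

row = xml_to_row(sample, 1, "393")
print(row["xmlContent"] == sample)

# Assumption: inside a Fabric notebook with a Lakehouse attached, a row like
# this could be appended to a table (the table name is hypothetical):
# spark.createDataFrame([row]).write.mode("append").saveAsTable("xml_documents")
```

Because the file is read as text rather than parsed into columns, nothing converts it to JSON along the way; the parse step only rejects malformed input.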

Hope this helps.

Hi @ThiyagarajanLG

We haven’t heard back from you since the last response and are just checking whether you have a resolution yet. If not, please reply with more details and we will try to help.

Thanks

Hi @ThiyagarajanLG

Thanks for using Fabric Community.

Why do you want to connect Azure Copy Data to the Lakehouse? Are you referring to the Copy Data activity here? Apologies, I could not understand the query. Could you please provide some screenshots so that I can understand it better?

Thanks
