FabCon is coming to Atlanta

Join us at FabCon Atlanta from March 16 - 20, 2026, for the ultimate Fabric, Power BI, AI and SQL community-led event. Save $200 with code FABCOMM.

Register now!- Power BI forums

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Data Stories Gallery

- Themes Gallery

- Contests Gallery

- QuickViz Gallery

- Quick Measures Gallery

- Visual Calculations Gallery

- Notebook Gallery

- Translytical Task Flow Gallery

- TMDL Gallery

- R Script Showcase

- Webinars and Video Gallery

- Ideas

- Custom Visuals Ideas (read-only)

- Issues

- Issues

- Events

- Upcoming Events

The Power BI Data Visualization World Championships is back! Get ahead of the game and start preparing now! Learn more

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- DAX Measure Optimization and Correction - Avg. SKU...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

DAX Measure Optimization and Correction - Avg. SKUs per Invoice - Filter/RelatedTable/DistinctCount

Hello everyone! First of all thank you very much for taking the time to read this question, is is about both a DAX measure optimization and correction.

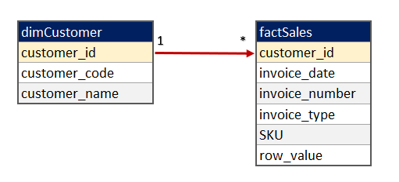

1. I have a data model that consist of two tables, dimCustomer and factSales. In reality dimCustomer has around 800K rows and factSales around 60M rows. It looks something like this

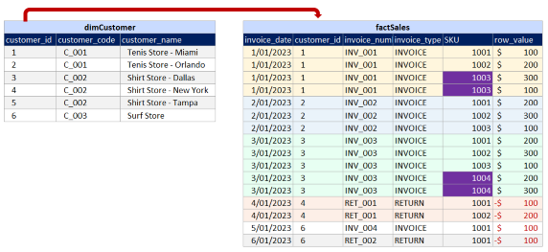

if we carefully look at the dimCustomer table, we can see that for each customer_code, I might have several customer_ids, this is because a customer_id is based on the different combinations of customer_code and customer_name, but in my company we focus on customer_code.

For the factSales table, it is transactional information of invoices and returns. Some invoices might have multiple lines for the same SKU (Purple Cells), this is because it was a gift or a different price for a certain amount of items, it just happens. (I can't group my fact table to have only one row per sku inside each invoice)

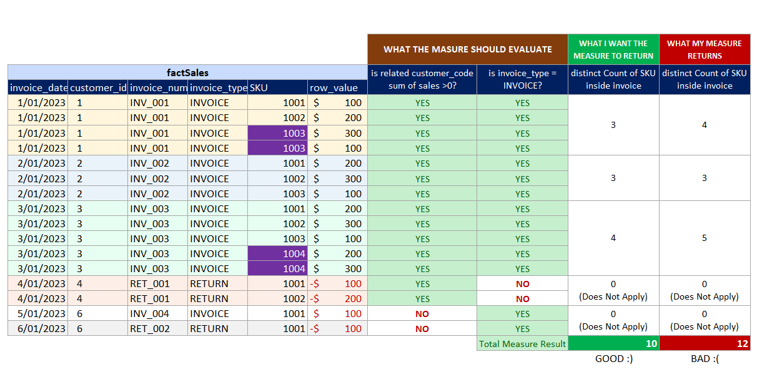

The KPI I'm trying get is the Average SKUs per Invoice. We have two business rules in my company: invoice_type = "INVOICE" and overall customer_code sum of sales >0, this means that if one customer_code sum of sales is 0 or lower because of returns in a certain period of time, I dont want to take him into account, nor count the SKUs he returned.

The calculation would be SUM of DISTINCT SKUs inside each invoice, divided by distinct count of invoices, (not leaving aside the two business rules)

The distinct invoices I have it, my problem is with the 'Sum of Distinct SKUs inside each invoice' part.

(I'm attaching a sample workbook with the data in the pictures and the loaded measures.)

I already have a measure that works, but with the whole dataset it is not performing well, it even shows an error of not enought resources to compute measure kind of type.

This is the working measure (Slow with whole dataset):

my idea is,

1. vTable0 = filter the fact table with the 4 fields I need, only invoice_type = "INVOICE" (Part that I guess is the most resource consuming)

2. vTable_1 = get a list of customer_code where sum of sales >0

3. vTable_2 = filter vTable0 based on the customer_code present in vTable_1

4. count rows of resulting vTable_2

Measure_Working_But_Slow =

VAR vTable_0 =

FILTER(

SUMMARIZE(

factSales,

dimCustomer[customer_code],

factSales[invoice_number],

factSales[invoice_type],

factSales[SKU]),

factSales[invoice_type]="INVOICE"

)

VAR vTable_1 =

FILTER(

SUMMARIZE(

dimCustomer,

dimCustomer[customer_code]

),

CALCULATE(SUM(factSales[row_value]))>0

)

VAR vTable_2 =

FILTER(

vTable_0,

dimCustomer[customer_code] IN vTable_1

)

RETURN

COUNTROWS(vTable_2)

This is the Not working measure (very close to the result and fast)

I got this idea from SQLBI relatedtable youtube video which performs like a charm. this is the idea

1. Filter customer_code based on sum of sales > 0

2. SUMX of countrows of related table where invoice_type = "INVOICE"

The error is that as in the same invoice we can have two rows with the same SKU, those two are counted, but I need to count only one row per unique SKU inside each invoice. So if the invoice has this phenomenon, the result wont be right.

Measure_Not_Working =

VAR vTable_0 =

FILTER(

VALUES(dimCustomer[customer_code]),

CALCULATE(SUM(factSales[row_value])) > 0

)

VAR vTable_1 =

SUMX(

vTable_0,

COUNTROWS(

FILTER(

RELATEDTABLE(factSales),

factSales[invoice_type] = "INVOICE"

)

)

)

RETURN vTable_1

This is kind of the logic that the measure has to follow to give the right result. For only customer codes that have a total sum of sales greater than 0 and for invoices which type is "INVOICE", we count distinct skus inside the invoice.

Also, the measure has to work in a linechart too. not only in card. (did some experiments with summarizecolumns that did not work for line charts)

I've wondered all around to see if there is a way to filter that related table before passing it to the count rows... That would be a good thing but I have not been able to figure out a way.

Thanks again for taking the time.!!!

PS: I just saw that i cant attach the pbix file directly as I am kind of new in the community.

WeTransfer link to download pbix file or xlsx file with tables

We Transfer Link (https://we.tl/t-lcrrUEigxJ)

or tables here:

customer_idcustomer_codecustomer_name

| 1 | C_001 | Tenis Store - Miami |

| 2 | C_001 | Tenis Store - Orlando |

| 3 | C_002 | Shirt Store - Dallas |

| 4 | C_002 | Shirt Store - New York |

| 5 | C_002 | Shirt Store - Tampa |

| 6 | C_003 | Surf Store |

invoice_datecustomer_idinvoice_numberinvoice_typeSKUrow_value

| 1/01/2023 | 1 | INV_001 | INVOICE | 1001 | 100 |

| 1/01/2023 | 1 | INV_001 | INVOICE | 1002 | 200 |

| 1/01/2023 | 1 | INV_001 | INVOICE | 1003 | 300 |

| 1/01/2023 | 1 | INV_001 | INVOICE | 1003 | 100 |

| 2/01/2023 | 2 | INV_002 | INVOICE | 1001 | 200 |

| 2/01/2023 | 2 | INV_002 | INVOICE | 1002 | 300 |

| 2/01/2023 | 2 | INV_002 | INVOICE | 1003 | 100 |

| 3/01/2023 | 3 | INV_003 | INVOICE | 1001 | 200 |

| 3/01/2023 | 3 | INV_003 | INVOICE | 1002 | 300 |

| 3/01/2023 | 3 | INV_003 | INVOICE | 1003 | 100 |

| 3/01/2023 | 3 | INV_003 | INVOICE | 1004 | 200 |

| 3/01/2023 | 3 | INV_003 | INVOICE | 1004 | 300 |

| 4/01/2023 | 4 | RET_001 | RETURN | 1001 | -100 |

| 4/01/2023 | 4 | RET_001 | RETURN | 1002 | -200 |

| 5/01/2023 | 6 | INV_004 | INVOICE | 1001 | 100 |

| 6/01/2023 | 6 | RET_002 | RETURN | 1001 | -100 |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Anonymous

In your slow measure where your are defining the vTable_2 variable, would it make any difference to remove 'dimCustomer' from 'dimCustomer'[customer_code] ?

I'm not sure if would make a difference but maybe it's worth a try.

Proud to be a Super User!

daxformatter.com makes life EASIER!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for the idea! Unfortunately, I tried both removing dimCustomer from dimCustomer[customer_code] in vTable_2 and factSales from factSales[invoice_type] in vTable_0 but performance is basically the same, for a filter applied on a lot of rows this is the result.

with table name = 37588 ms

vs

without table name 36548 ms.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous

If I think of anything else, I'll let you know. I'm going to watch the thread because I'd like to find out what other ideas might come up.

Good Luck

Proud to be a Super User!

daxformatter.com makes life EASIER!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you!! I will still work on it on my side and post if anything changes.

Helpful resources

Power BI Dataviz World Championships

The Power BI Data Visualization World Championships is back! Get ahead of the game and start preparing now!

Power BI Monthly Update - November 2025

Check out the November 2025 Power BI update to learn about new features.

| User | Count |

|---|---|

| 59 | |

| 46 | |

| 42 | |

| 23 | |

| 17 |

| User | Count |

|---|---|

| 190 | |

| 122 | |

| 96 | |

| 66 | |

| 47 |